With decades of experience in management consulting, Marco Gaietti is a seasoned expert in business management. His expertise spans a broad range of areas, including strategic management, operations, and customer relations, making him a critical voice in the conversation regarding how technology reshapes organizational structures. As major tech firms shift from mere experimentation to active enforcement of AI adoption, Gaietti provides a necessary bridge between technical implementation and human-centric leadership.

In this discussion, we explore the evolving landscape of performance reviews where AI fluency has become a core requirement. We delve into the complexities of measuring high-quality work in an automated environment, the psychological “carrots and sticks” used to drive adoption, and the legal hurdles HR departments face concerning age discrimination. Furthermore, the conversation addresses the critical gap between upskilling employees and the actual redesign of workflows to ensure that AI becomes a transformative force rather than just another digital chore.

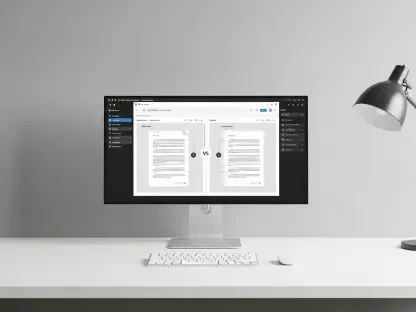

Major tech firms are now tracking AI adoption in software engineering reviews and counting AI-assisted code lines. How can managers distinguish between simple volume and high-quality strategic thinking, and what specific metrics should be used to ensure these reviews remain fair?

We are entering a phase where “quantity” can be misleading because AI allows an engineer to generate hundreds of lines of code in seconds. To move beyond simple volume, managers must implement a three-step evaluation process that prioritizes “Reviewer-in-the-Loop” metrics. First, assess the “Refinement Rate,” which measures how much an employee edits or corrects the AI’s initial output, demonstrating their critical judgment and technical oversight. Second, look at the “Strategic Alignment” of the code—asking if the AI-generated solution solves the business problem efficiently or just adds technical debt. Finally, evaluate “Peer Impact,” where 360-degree feedback confirms whether an employee’s AI use actually speeds up the team’s delivery or forces others to spend hours debugging poorly supervised machine output. This ensures that we value the human pilot who steers the machine, rather than just the speed of the engine itself.
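The interview does not give a formula for the “Refinement Rate,” so the following is only a minimal sketch of one way it might be approximated: compare the AI’s initial output with the code that was finally committed and report the share that changed. The function name `refinement_rate` and the choice of a line-level diff are assumptions made for illustration, not a method described by Gaietti.

```python
from difflib import SequenceMatcher


def refinement_rate(ai_output: str, final_code: str) -> float:
    """Approximate how much a human changed the AI's first draft.

    Hypothetical metric: 0.0 means the draft was committed untouched,
    1.0 means no line of the draft survived. Compares whole lines.
    """
    ai_lines = ai_output.splitlines()
    final_lines = final_code.splitlines()
    if not ai_lines and not final_lines:
        return 0.0  # nothing generated and nothing committed
    # ratio() is 2 * matching_lines / total_lines across both versions
    similarity = SequenceMatcher(None, ai_lines, final_lines, autojunk=False).ratio()
    return 1.0 - similarity


draft = "a = 1\nb = 2\nc = a + b\nprint(c)"
final = "a = 1\nb = 2\nc = a * b\nprint(c)"
print(refinement_rate(draft, final))  # one of four lines was rewritten
```

A number like this says only that editing happened, not that the edits were good, which is why the answer above pairs it with strategic alignment and peer feedback rather than using it alone.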

Daily AI usage often remains below sixty percent despite widespread tool access. When companies implement competency scoring systems from one to five to incentivize use, what “carrots and sticks” prove most effective, and how do you handle employees who resist these new digital workflows?

It is a striking reality that even with tools at their fingertips, fewer than 60% of employees with access actually use AI in their daily workflow. To bridge this, the most effective “carrot” is the creation of an internal “AI Champion” program where those who reach a level four or five competency score receive visibility and the opportunity to lead cross-functional projects. The “stick” is more subtle; at firms like Conductor, it involves integrating these scores into the very core of performance reviews, making it clear that digital stagnation directly impacts promotion eligibility. For the resisters, I recommend a “low-stakes sandbox” approach where they are tasked with using AI for non-critical administrative tasks first, reducing the fear of making a high-stakes error. We have found that once an employee experiences that “aha moment” of saving two hours on a mundane task, the psychological barrier tends to dissolve naturally.

Tying performance ratings to AI proficiency can create legal risks regarding age discrimination and disparate impact. What steps should HR departments take to audit these metrics for bias, and how do you support staff in roles where AI applications are not yet fully developed?

HR departments must be extremely vigilant, as law firms such as Ogletree Deakins have warned that these metrics can inadvertently penalize older workers who may have different generational comfort levels with new tech. To mitigate this, HR should conduct a “Disparate Impact Audit” every six months, comparing the AI proficiency scores across different age brackets and protected groups to ensure no one is being unfairly marginalized. In roles where AI tools are still nascent or under-resourced, we must implement “Weighted Performance Rubrics” that adjust expectations based on the actual availability of relevant tools for that specific function. It is vital to provide “safe harbor” periods where employees are judged on their willingness to learn and experiment rather than their immediate mastery. This protection ensures that the transition feels like an evolution of their career rather than an existential threat to their job security.
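The interview does not say how such an audit would be computed. One common first-pass screen is the “four-fifths rule” of thumb from the US Uniform Guidelines on Employee Selection Procedures: a group whose favorable-outcome rate falls below 80% of the best-performing group’s rate deserves a closer look. The sketch below applies that idea to the one-to-five competency scores discussed earlier, treating a score of four or five as the favorable outcome. The group labels, the sample data, and the function names are hypothetical.

```python
from collections import defaultdict


def adverse_impact_ratios(records, passing_score=4):
    """Return each group's pass rate divided by the highest group's pass rate.

    records: iterable of (group_label, competency_score) pairs.
    A ratio of 1.0 marks the best-performing group.
    """
    totals = defaultdict(int)
    passed = defaultdict(int)
    for group, score in records:
        totals[group] += 1
        if score >= passing_score:
            passed[group] += 1
    rates = {group: passed[group] / totals[group] for group in totals}
    best = max(rates.values())
    if best == 0:
        return {group: None for group in rates}  # nobody passed; ratio undefined
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the four-fifths threshold."""
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


# Hypothetical review-cycle data: (age bracket, AI competency score)
scores = [
    ("under_40", 5), ("under_40", 4), ("under_40", 4), ("under_40", 3),
    ("40_plus", 4), ("40_plus", 3), ("40_plus", 2), ("40_plus", 3),
]
ratios = adverse_impact_ratios(scores)
print(flagged_groups(ratios))  # ['40_plus']
```

A flag from a check like this is a prompt for investigation, not a finding; with small teams the ratio swings widely, so a real audit would likely add a statistical significance test and legal review.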

Most organizations prioritize upskilling current employees over hiring new talent, yet many fail to actually restructure workflows around AI capabilities. How can leadership close this gap, and what are the step-by-step requirements for successfully redesigning a role to be AI-centric?

While 83% of HR leaders believe success depends on upskilling existing staff, 84% of organizations haven’t actually changed how the work gets done, which creates a massive friction point. To close this gap, leadership must first perform a “Task Decomposition” to identify which 20% to 30% of a role’s duties are prime for AI automation, such as data entry or initial drafting. Second, they must formally redefine the job description to shift the employee’s focus toward “Output Verification” and “Creative Integration,” effectively turning them from a “doer” into a “director.” For example, a successful transition in a marketing department involves moving a copywriter from writing individual ads to managing an AI-driven content engine that generates dozens of variants, which they then curate for brand voice. This shift ensures that the employee’s institutional knowledge is the anchor, while the AI acts as the force multiplier for their specific business context.

What is your forecast for AI integration in the workplace?

I predict that by 2026, the “AI Skills Gap” will become the primary reason for turnover as employees gravitate toward companies that offer the best “AI-Human Hybrid” environments. We will see a shift where “AI Fluency” is no longer a niche skill for software engineers but a baseline requirement for every role, similar to how Microsoft Office became mandatory decades ago. However, the real winners will be the organizations that realize that technology is only as good as the culture that adopts it. My advice for readers is to stop viewing AI as a replacement for human talent and start treating it as a new colleague that requires a specific set of management skills. If you focus on building “role-specific learning” pathways today, you won’t just be keeping up with the pace of change—you will be the one setting it.