With decades of experience in management consulting, Marco Gaietti is a seasoned expert in business management. His expertise spans a broad range of areas, including strategic management, operations, and customer relations, making him a vital voice in the current debate over integrating artificial intelligence into the workplace. In this discussion, he explores the critical differences between human talent and AI agents, challenges the trend of personifying software, and highlights the evolving responsibilities of the modern workforce.

The following conversation examines the nuances of supervising AI tools, the shifting nature of job descriptions, and the long-term resource planning required to manage these sophisticated yet fundamentally limited digital assets.

While some people view AI agents as colleagues, these tools lack social needs like birthdays, office culture, or personal recognition. How does this lack of human engagement affect team dynamics, and what practical steps should leaders take to maintain a clear distinction between software and teammates?

The most significant danger in treating an AI agent like a colleague is the erosion of actual human culture. When we personify these tools, we risk devaluing the emotional labor and social bonds that human employees bring to the table, such as participating in a March Madness pool or buying Girl Scout cookies from a coworker. To maintain a healthy dynamic, leaders must explicitly categorize AI as a tool—much like a computer or a search engine—rather than a team member with a personality. We aren’t dealing with a sentient being like Commander Data from Star Trek; we are dealing with a marketing workflow or a search interface. Practical steps include focusing recognition exclusively on the human who managed the tool and ensuring that “social” time, like happy hour, remains a strictly human-only zone to reinforce those vital interpersonal connections.

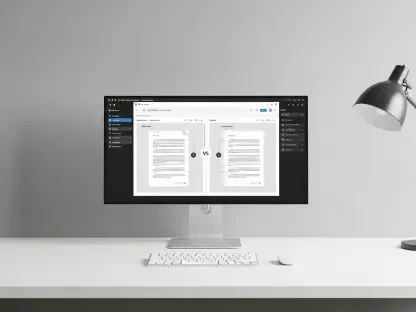

Using AI often turns a traditional employee into a “programmer” who doesn’t need coding skills but must constantly refine prompts for better results. What are the long-term implications for standard job descriptions, and can you provide an anecdote showing how this oversight changes a typical daily workflow?

Job descriptions are shifting from task execution to high-level oversight and “prompt engineering,” which essentially makes every employee a supervisor of digital output. For instance, consider a marketing professional who previously spent hours manually scouring TV, print, and radio to find a competitor’s advertisements. Today, that same employee might ask an AI to find Ford’s competitors’ truck ads, but the first pass is often incomplete or includes irrelevant data. The daily workflow is no longer about the “search” itself, but about the repetitive cycle of refining the prompt to include internet ads or to better define what counts as a “truck” in a changing market. This turns the role into one of constant monitoring and corrective action, requiring a deep understanding of the subject matter to catch the gaps and subtle errors in the AI’s output.

AI agents are often unable to distinguish between high-quality and poor-quality outputs without human intervention. In scenarios where answers aren’t just right or wrong but vary in effectiveness, what specific monitoring steps are required, and how do you prevent the human supervisor from becoming overwhelmed?

When responses exist on a continuum of “good to bad” rather than a binary “right or wrong,” the supervisor must establish a rigorous feedback loop that defines clear parameters for quality. This involves constantly reviewing the AI’s output against current external information and adjusting the prompts to narrow the margin of error. To prevent burnout, companies must recognize that these agents are essentially “dumb” employees that cannot self-correct or identify their own mistakes. We prevent overwhelm by ensuring the human supervisor isn’t just a passive observer, but is empowered with the time and authority to treat the AI as a high-maintenance tool. The goal is to set the expectation that the agent is there to assist the human, not to replace the human’s critical thinking or quality control duties.

Traditional management focuses on performance appraisals and goal-setting for humans, yet AI tools are now embedded in critical workflows. How should accountability be assigned when an agent fails a task, and what metrics would you use to measure the effectiveness of the person supervising that AI?

Accountability must always rest with the human operator because the AI agent is incapable of caring about its performance or understanding the stakes of its failure. We don’t set goals for the software; we set goals for the employee who is leveraging that software to achieve a business outcome. If an agent fails a task, the “performance appraisal” is conducted on the human’s ability to monitor, catch, and correct that failure before it impacts the organization. Effective metrics would include the accuracy of the final output after human intervention and the speed at which the employee can refine the AI’s prompts to reach an acceptable standard. Ultimately, we evaluate the person on how well they manage their “digital subordinate,” ensuring the tool’s limitations don’t become the company’s liabilities.

Since AI agents do not improve on their own and require significant effort to switch between different types of tasks, how should companies approach long-term resource planning? What are the trade-offs between hiring more staff and investing in the constant maintenance these “dumb” tools require?

Long-term planning requires a sobering realization: AI is not a “set it and forget it” solution, and shifting it to a new task involves a massive amount of data and manual reconfiguration. Companies face a trade-off between the perceived efficiency of automation and the very real cost of the human labor required to maintain it. While you might save on headcount for low-level data entry, you must invest heavily in skilled staff who can handle the constant monitoring and prompt adjustments needed to keep the AI relevant. Resource planning should focus on the “total cost of ownership,” which includes the human hours spent supervising these tools, rather than assuming the software will magically scale productivity without oversight. If you aren’t prepared for the “ton of work” required to switch an agent’s task, you may find that traditional hiring is actually more flexible for diverse, evolving business needs.

What is your forecast for AI agents?

I expect the initial “honeymoon phase” of treating AI agents as magical collaborators to fade as leaders realize these tools are, in effect, extremely dumb employees with no capacity for self-improvement. My forecast is that we will see a shift back to fundamental management principles, with the focus returning to the human being behind the screen. We will stop trying to integrate AI into office culture and start treating it with the same clinical distance we afford a spreadsheet or a search engine. Success will belong to the organizations that stop inviting their software to “happy hour” and instead double down on training their people to be the rigorous, critical supervisors that these “dumb” tools so desperately require.