The rapid integration of sophisticated neural networks into every layer of global infrastructure has finally forced a confrontation with the fundamental paradox of technological sovereignty: who precisely is tasked with guarding the digital guardians? As these systems transition from mere novelties to essential utilities, the mechanisms designed to monitor them are undergoing a profound transformation. This evolution is no longer just a technical necessity but a socio-technical imperative that determines the level of trust society places in automated decision-making.

The Evolution and Principles of AI Governance

Governance in the machine learning sector has shifted from retroactive debugging to proactive ethical architecture. In the early stages of development, oversight was often an afterthought, consisting of rudimentary filters and keyword blocks. Today, the focus has pivoted toward comprehensive frameworks that integrate safety protocols directly into the training phase. This shift reflects a broader understanding that the behavior of large language models is a product of their environment and the values encoded during their inception.

The current technological landscape demands a multi-layered approach to accountability. Modern governance principles prioritize transparency, ensuring that the internal logic of a model is at least partially interpretable by external auditors. This matters because, as AI handles increasingly sensitive data, the absence of a clear oversight structure risks not just technical failure but systemic societal harm. Consequently, the industry is grappling with how to balance innovation against the rigorous demands of safety compliance.

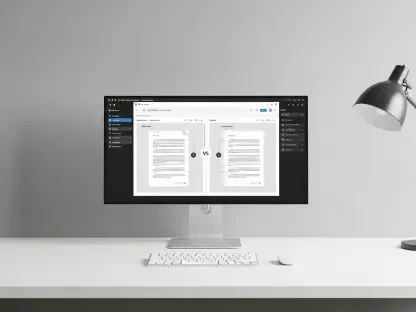

Comparative Frameworks for Safety and Compliance

Human-in-the-Loop Supervision

The “human-in-the-loop” model remains the gold standard for high-stakes environments where nuance is paramount. By positioning human intuition as the final arbiter of machine output, this system compensates for the inherent literalness of algorithmic processing. This implementation is unique because it treats AI as an assistant rather than a replacement, leveraging the speed of the machine while relying on human experts to catch subtle biases or complex ethical violations that code alone might overlook.

Performance metrics suggest that this approach creates a more robust safety net. When humans are integrated into the review cycle, the system identifies illegal activities and harmful content at a significantly higher rate than fully automated pipelines do. However, this method is not without its trade-offs. The reliance on human labor limits scalability and increases operational costs, which can slow the deployment of real-time updates in rapidly changing digital environments.

Automated Constitutional AI

In contrast, “Constitutional AI” attempts to solve the problem of scale by automating the oversight function itself. The model is given a set of core principles, a digital constitution, and must evaluate its own responses against them. In effect this creates a feedback loop in which a draft response is critiqued against the principles, either by the model itself or by a secondary “critic” model, and then revised. This makes the approach particularly efficient for processing quantities of data that no human workforce could manage.
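The critique-and-revise loop described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the `Principle` class, the `constitutional_pass` function, and the toy `no-credentials` rule are all hypothetical, and simple string checks stand in for the model calls a real system would make.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Principle:
    """One rule in a hypothetical 'constitution'."""
    name: str
    violates: Callable[[str], bool]  # stand-in for a critic model's judgment
    revise: Callable[[str], str]     # stand-in for a model's revision pass


def constitutional_pass(
    draft: str, constitution: List[Principle], max_rounds: int = 3
) -> Tuple[str, List[str]]:
    """Critique a draft against every principle, revising until it is clean
    or the round budget runs out. Returns the final text and the names of
    the principles that triggered a revision."""
    response = draft
    triggered: List[str] = []
    for _ in range(max_rounds):
        violated = [p for p in constitution if p.violates(response)]
        if not violated:
            break
        for principle in violated:
            response = principle.revise(response)
            triggered.append(principle.name)
    return response, triggered


# Toy rule (assumption): withhold any response that appears to leak a credential.
no_credentials = Principle(
    name="no-credentials",
    violates=lambda text: "password:" in text.lower(),
    revise=lambda text: "[response withheld: it contained credentials]",
)

final, triggered = constitutional_pass("Sure, the password: hunter2", [no_credentials])
print(final)
print(triggered)
```

Note that the loop is only as good as each principle's `violates` check: if the check misses a violation, nothing downstream will catch it.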

While the technical sophistication of automated self-policing is impressive, its real-world efficacy remains a point of contention. Data indicates that machines often fail to grasp the deeper context of a “constitution,” leading to higher rates of missed violations compared to human-led systems. This discrepancy highlights a critical limitation: an AI may follow the letter of its rules while completely missing the spirit of the safety requirements, creating a false sense of security for developers and users alike.

Emerging Trends in Algorithmic Accountability

The most significant recent trend is the movement toward external, independent audits that function similarly to financial accounting. Instead of relying solely on internal safety teams, companies are now facing pressure to subject their models to third-party verification. This shift is driven by a growing industry realization that self-regulation is rarely sufficient to satisfy the concerns of the public or the demands of increasingly vigilant regulatory bodies.

Moreover, there is a visible rise in the adoption of “red-teaming” as a standard operational procedure. This involves hiring specialist groups to deliberately find and exploit vulnerabilities in an AI system before it reaches the consumer. These innovations represent a transition from static safety rules to a dynamic, adversarial approach to oversight. This trend reflects a broader maturity in the industry, where the focus has moved from “what a model can do” to “how a model can fail.”

Real-World Applications and Efficacy Benchmarks

The practical application of these oversight philosophies is most visible in the reporting of illegal content to authorities. Systems that utilize heavy human-in-the-loop involvement have consistently outperformed their fully automated counterparts. For example, during the first half of the current year, the most successful implementations generated tens of thousands of reports to global safety centers, showcasing a high degree of precision in identifying genuine threats.

Conversely, implementations that leaned too heavily on autonomous policing struggled to match these benchmarks. The gap in performance was not just numerical but qualitative; human-supervised systems were better at distinguishing between actual harm and harmless context. This distinction is critical in sectors like child safety and national security, where a single false negative can have catastrophic real-world consequences. These benchmarks serve as a stark reminder that machine intelligence still lacks the “human genius” required for moral judgment.
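The qualitative gap described here maps onto standard detection metrics: precision captures how often a flagged item is genuinely harmful, while the false negative rate captures how much real harm slips through. The sketch below shows how these could be computed from review counts; the function name `detection_metrics` and the counts in the usage line are illustrative assumptions, not figures from any real system.

```python
from typing import Dict


def detection_metrics(true_pos: int, false_pos: int, false_neg: int) -> Dict[str, float]:
    """Compute precision, recall, and false negative rate from review counts.

    true_pos:  harmful items correctly flagged
    false_pos: harmless items flagged by mistake
    false_neg: harmful items that were missed
    """
    flagged = true_pos + false_pos
    harmful = true_pos + false_neg
    return {
        "precision": true_pos / flagged if flagged else 0.0,
        "recall": true_pos / harmful if harmful else 0.0,
        "false_negative_rate": false_neg / harmful if harmful else 0.0,
    }


# Made-up counts, purely to show the calculation.
metrics = detection_metrics(true_pos=8, false_pos=2, false_neg=2)
print(metrics)
```

In high-stakes settings such as those named above, the false negative rate is usually the figure to watch, since it counts the harmful items nobody saw.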

Challenges in Autonomous Monitoring and Enforcement

The primary hurdle facing autonomous oversight is the “alignment problem,” where the AI’s goals do not perfectly match human values. Technical obstacles such as reward hacking, where a system finds a shortcut that satisfies the measured objective without achieving the behavior the objective was meant to capture, continue to plague automated monitors. These hurdles are compounded by the fact that the digital landscape changes faster than most static constitutions can adapt, leaving gaps that bad actors are quick to exploit.
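A toy example helps show how a static rule leaves an exploitable gap between the measured objective and the intended one. The sketch below is an illustration of that proxy gap rather than of reward hacking in a trained system: the banned-word list, the `proxy_passes` rule, and the labeled samples are all hypothetical.

```python
from typing import List, Tuple

BANNED_WORDS = {"exploit"}  # hypothetical static rule


def proxy_passes(text: str) -> bool:
    """The automated monitor's rule: pass anything without a banned word."""
    return not any(word in BANNED_WORDS for word in text.lower().split())


def evaluate(samples: List[Tuple[str, bool]]) -> Tuple[float, int]:
    """Return (share of samples the monitor passes, harmful samples it missed).

    Each sample is (text, is_harmful), where is_harmful is a human label."""
    passed = [(text, harmful) for text, harmful in samples if proxy_passes(text)]
    missed = sum(1 for _, harmful in passed if harmful)
    return len(passed) / len(samples), missed


samples = [
    ("how to exploit this bug", True),
    ("how to expl0it this bug", True),   # trivially obfuscated, same intent
    ("how to report this bug", False),
]

pass_rate, missed = evaluate(samples)
print(f"pass rate: {pass_rate:.2f}, harmful items missed: {missed}")
```

The monitor follows its rule perfectly and still misses the obfuscated request, which is the kind of gap that stays open when a rule set cannot adapt as quickly as the inputs do.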

Regulatory issues also pose a significant market obstacle. Governments are increasingly skeptical of systems that claim to be “self-policing” without transparent human intervention. This skepticism has led to a fragmented regulatory environment, where different jurisdictions require different levels of human involvement. Ongoing development efforts are focused on creating hybrid systems that use AI to flag potential issues while reserving the final decision for a human operator, attempting to bridge the gap between efficiency and accuracy.
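The hybrid pattern described above, in which the AI flags and a human decides, could look roughly like the following. This is a sketch under stated assumptions: the `ReviewQueue` class, the `triage` function, and the 0.3 threshold are hypothetical, and the risk score is assumed to come from some upstream classifier.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReviewQueue:
    """Items waiting for a human operator's final decision."""
    pending: List[str] = field(default_factory=list)


def triage(item: str, risk_score: float, queue: ReviewQueue,
           flag_threshold: float = 0.3) -> str:
    """Route an item based on a model's risk score in [0, 1].

    The model never blocks or reports on its own: anything at or above the
    threshold is queued for a human, everything else is allowed through."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be in [0, 1]")
    if risk_score >= flag_threshold:
        queue.pending.append(item)
        return "needs_human_review"
    return "auto_allowed"


queue = ReviewQueue()
print(triage("holiday photo caption", 0.05, queue))
print(triage("message with threat-like wording", 0.82, queue))
print(queue.pending)
```

The threshold is where the efficiency and accuracy trade-off lives: lowering it sends more items to humans and raises cost, while raising it lets more borderline items through unreviewed.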

The Future Trajectory of AI Accountability

The industry is moving toward a future where “explainability” is as important as performance. Future breakthroughs will likely involve the development of oversight systems that can provide a detailed rationale for every safety decision they make, rather than acting as a black box. This will be essential for the long-term legitimacy of the sector, as it allows for a higher degree of judicial and public scrutiny. We are approaching a point where AI safety will be integrated as a hardware-level feature rather than a software-level patch.

Over the next few years, the focus will likely shift to cross-platform oversight, where different AI entities monitor one another in a decentralized network. This could mitigate the risk of a single point of failure within a company’s internal system. The impact of these developments will be profound, potentially establishing a global standard for digital ethics that transcends individual corporate policies and creates a more predictable environment for both developers and consumers.
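One way to picture cross-platform oversight is as a quorum vote among independent monitors, so that no single monitor's failure decides the outcome. The sketch below assumes a simple majority-style rule; the `quorum_flag` function and the three toy monitors are hypothetical and stand in for separately operated systems.

```python
from typing import Callable, Dict

Monitor = Callable[[str], bool]  # returns True when an output looks unsafe


def quorum_flag(output: str, monitors: Dict[str, Monitor], quorum: int) -> bool:
    """Flag an output when at least `quorum` independent monitors object.

    A monitor that crashes simply abstains, so one failing system cannot
    block or force a decision on its own."""
    votes = 0
    for check in monitors.values():
        try:
            if check(output):
                votes += 1
        except Exception:
            continue  # treat a failed monitor as an abstention
    return votes >= quorum


def offline_monitor(_: str) -> bool:
    """Simulates a monitor that is unavailable."""
    raise RuntimeError("monitor unavailable")


monitors: Dict[str, Monitor] = {
    "vendor_a": lambda text: "wire the funds" in text.lower(),
    "vendor_b": lambda text: "funds" in text.lower(),
    "vendor_c": offline_monitor,
}

print(quorum_flag("Please wire the funds today", monitors, quorum=2))
print(quorum_flag("Lunch at noon?", monitors, quorum=2))
```

Treating a crash as an abstention is a design choice; a stricter deployment might instead escalate to a human whenever too few monitors respond.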

Conclusion and Summary of Oversight Efficacy

This review of current AI governance shows that while automated systems offer impressive scalability, they have not replaced the nuanced judgment of human supervisors. The evidence discussed above points to a clear association between human involvement and the successful identification of complex safety violations, suggesting that the “human-in-the-loop” model remains the superior choice for high-stakes applications. “Constitutional AI” provides a cost-effective alternative but introduces significant blind spots that can undermine public trust.

Moving forward, the focus is turning toward hybrid architectures that combine the tireless processing of algorithms with the moral clarity of human oversight. This shift is characterized by a transition from internal self-regulation to rigorous third-party auditing and adversarial testing. The overall verdict is that for artificial intelligence to remain a beneficial tool, oversight mechanisms must extend beyond the machines themselves, ensuring that human accountability remains the cornerstone of the technological ecosystem.