The transition from traditional assistive digital tools to fully autonomous agentic systems represents a fundamental shift in the operational DNA of the modern global enterprise. As organizations integrate sophisticated agents capable of executing multi-step workflows with minimal human oversight, the technical challenges of implementation are rapidly being eclipsed by the need for a profound ethical and cultural reimagining. Building a successful partnership between human professionals and machine agents requires more than processing power or fast data analysis; it demands a robust moral foundation in which technology is woven into the organizational fabric with intentionality and caution. This evolution is not merely about increasing efficiency but about redefining the nature of collaborative work in an era when machines exercise a high degree of agency and decision-making capability. Consequently, leadership must shift its focus beyond the “how-to” of deployment toward a “why-and-should-we” framework that prioritizes long-term societal and corporate stability over short-term productivity gains.

Cultivating a culture of trust remains the most significant hurdle for executives navigating this technological frontier. While agentic AI systems offer undeniable competitive advantages by synthesizing massive datasets in seconds, their utility is ultimately dictated by the confidence of the human workforce assigned to manage them. Skepticism is a natural response to any disruptive force, particularly when that force begins to assume roles and responsibilities that were previously the exclusive domain of human judgment and expertise. To establish a sustainable operating model, leadership must move beyond superficial reassurances and instead embed rigorous governance practices that emphasize risk management, safety, and transparency. By prioritizing these ethical pillars, companies can mitigate the inherent friction between human employees and autonomous agents, ensuring that the technology serves as a reliable extension of human intent rather than an unpredictable or opaque substitute. This process of building trust is an ongoing commitment that requires clear communication regarding the limitations and goals of AI integration within the specific context of the business mission.

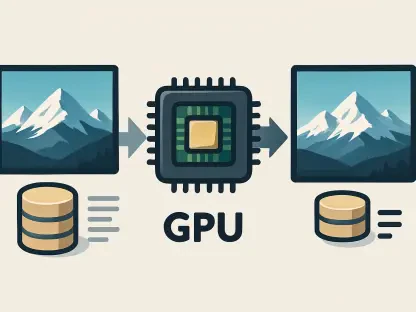

Understanding the mechanics of agentic AI is essential for any leader tasked with overseeing this transition. Unlike legacy software that follows rigid, pre-defined scripts, modern agents operate through a “perceive-reason-act-learn” cycle: they observe their environment, decide on a next step, execute it, and adjust their behavior based on real-time feedback and historical patterns. This shift toward autonomy, while powerful, creates a significant “transparency gap.” The large language models that power many agents are difficult to inspect, and because they typically generate output by sampling, an agent may produce different results when presented with identical prompts or environmental conditions. Maintaining a consistent standard of output therefore becomes a complex logistical and ethical challenge, and this lack of predictability often clashes with the stringent requirements of legal compliance and social accountability that govern modern industry. Bridging the gap between the fluid nature of machine learning and the rigid expectations of corporate responsibility is a primary task for those designing the next generation of human-machine workflows.
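
The perceive-reason-act-learn cycle, and the consistency problem it raises, can be illustrated with a deliberately small Python sketch. Everything here is hypothetical: the `ToyAgent` and `ToyEnvironment` classes, the ticket-backlog scenario, and the reward values are assumptions made for illustration, and a simple explore-or-exploit rule stands in for the language-model reasoning a real agent would use. The agent's randomness comes from an explicit `random.Random` instance, so fixing the seed makes a run repeatable.

```python
import random
from dataclasses import dataclass, field


class ToyEnvironment:
    """Hypothetical environment: a backlog of support tickets."""

    # Tickets cleared per action (illustrative values, not real data).
    EFFECTS = {"triage": 3, "escalate": 1, "wait": 0}

    def __init__(self, backlog: int) -> None:
        self.backlog = backlog

    def observe(self) -> dict:
        return {"backlog": self.backlog}

    def apply(self, action: str) -> float:
        cleared = min(self.EFFECTS[action], self.backlog)
        self.backlog -= cleared
        return float(cleared)  # reward = tickets cleared


@dataclass
class ToyAgent:
    """Hypothetical agent running a perceive-reason-act-learn cycle."""

    actions: list[str]
    rng: random.Random
    explore_rate: float = 0.2
    scores: dict[str, float] = field(default_factory=dict)  # running mean reward per action
    counts: dict[str, int] = field(default_factory=dict)    # times each action was tried

    def perceive(self, observation: dict) -> dict:
        # Keep only the signal this agent reasons over.
        return {"backlog": observation["backlog"]}

    def reason(self, state: dict) -> str:
        if state["backlog"] == 0:
            return "wait"  # nothing left to do
        # Stochastic step: sometimes explore, otherwise pick the best action so far.
        if not self.scores or self.rng.random() < self.explore_rate:
            return self.rng.choice(self.actions)
        return max(self.scores, key=self.scores.get)

    def act(self, action: str, env: ToyEnvironment) -> float:
        return env.apply(action)

    def learn(self, action: str, reward: float) -> None:
        # Incremental update of the mean reward observed for this action.
        n = self.counts.get(action, 0) + 1
        previous = self.scores.get(action, 0.0)
        self.counts[action] = n
        self.scores[action] = previous + (reward - previous) / n


def run_episode(seed: int, steps: int = 10) -> list[str]:
    agent = ToyAgent(actions=["triage", "escalate", "wait"], rng=random.Random(seed))
    env = ToyEnvironment(backlog=50)
    trace = []
    for _ in range(steps):
        state = agent.perceive(env.observe())  # perceive
        action = agent.reason(state)           # reason
        reward = agent.act(action, env)        # act
        agent.learn(action, reward)            # learn
        trace.append(action)
    return trace


print(run_episode(seed=7) == run_episode(seed=7))  # prints True: same seed, same trace
```

Different seeds can produce different action sequences from the same starting conditions. Recording seeds or full decision traces is one practical, if partial, response to the reproducibility concerns above; hosted language models may not guarantee identical output even with fixed settings.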

Establishing Accountability and Transparency Guardrails

There is broad consensus among ethicists and industry leaders that autonomous systems, regardless of their complexity, cannot be held morally or legally responsible for their own actions. Because AI lacks a conscience, an understanding of social consequences, and the capacity to bear the weight of professional failure, human-led guardrails are an absolute necessity. Human oversight serves as the final arbiter and the only reliable mechanism for ensuring that automated outputs remain aligned with organizational values and ethical standards. Without a dedicated “human-in-the-loop” to verify critical decisions, the risk that a system drifts into misaligned or harmful behavior rises sharply. This reality requires the human worker to shift from direct executor of tasks to strategic supervisor, monitoring the ethical trajectory of the autonomous systems under their purview. Maintaining this hierarchy is essential for preserving the integrity of the enterprise and protecting it from the liabilities inherent in unmonitored machine agency.
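
One common way to put a human in the loop is an approval gate that lets routine, reversible actions proceed automatically while routing risky or irreversible ones to a named reviewer. The sketch below assumes that each proposed action arrives with a risk score between 0 and 1 (from the agent itself or a separate classifier) and a reversibility flag; the `ProposedAction`, `Decision`, and `gate` names, the 0.3 threshold, and the example actions are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    description: str
    risk_score: float  # assumed 0.0-1.0 estimate; how it is produced is out of scope here
    reversible: bool


@dataclass(frozen=True)
class Decision:
    action: ProposedAction
    approved: bool
    decided_by: str  # "agent" for auto-approval, otherwise the reviewer's identifier


def gate(action: ProposedAction,
         reviewer: Callable[[ProposedAction], bool],
         reviewer_id: str,
         risk_threshold: float = 0.3) -> Decision:
    """Auto-approve low-risk, reversible actions; send everything else to a human."""
    if action.risk_score >= risk_threshold or not action.reversible:
        return Decision(action, reviewer(action), reviewer_id)
    return Decision(action, True, "agent")


# Usage: a stand-in reviewer that declines everything it is shown.
audit_trail = [
    gate(proposal, reviewer=lambda a: False, reviewer_id="finance-lead")
    for proposal in (
        ProposedAction("Send weekly status summary", 0.05, True),
        ProposedAction("Issue a $4,000 customer refund", 0.6, False),
    )
]
for decision in audit_trail:
    print(decision.action.description, decision.approved, decision.decided_by)
```

Keeping every `Decision`, including who made it, gives the supervising human an audit trail; the threshold itself is a policy choice the organization would need to set and revisit.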

The “black box” nature of contemporary neural networks frequently obscures the internal logic that leads to a specific conclusion or action. To counter this opacity, many forward-thinking organizations are prioritizing “explainable AI” (XAI) methods as a core component of their technology stack. XAI tools act as a diagnostic layer, exposing the statistical associations and feature weightings an agent relied on to reach a particular judgment. By providing a clear rationale for automated decisions, these tools allow human partners to identify subtle logic errors or hallucinated information before they manifest as operational failures. This level of transparency is not just a technical requirement for debugging; it is a psychological necessity for a functional partnership. When humans can see the “why” behind an agent’s behavior, the machine ceases to be a mysterious, untrustworthy entity and becomes a more predictable, manageable tool. This clarity strengthens collaboration and keeps the partnership grounded in shared understanding and objectives.
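
For the simplest models, an explanation can be computed exactly. The sketch below assumes a linear scoring model, where each feature's contribution is its weight multiplied by how far the input sits from a reference baseline; the contributions then sum to the difference between the input's score and the baseline's score. The feature names, weights, and baseline are invented for illustration. Deep networks require approximate attribution techniques such as SHAP or integrated gradients, which aim at a similar per-feature breakdown.

```python
def explain_linear(weights: dict[str, float], bias: float,
                   features: dict[str, float],
                   baseline: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Return the model score and per-feature contributions relative to a baseline."""
    score = bias + sum(w * features[name] for name, w in weights.items())
    contributions = [(name, w * (features[name] - baseline[name]))
                     for name, w in weights.items()]
    # Largest effects first, so a reviewer sees what drove the decision.
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    return score, contributions


# Hypothetical credit-screening model and applicant (illustrative numbers only).
weights = {"income_k": 0.02, "missed_payments": -0.5, "years_employed": 0.1}
baseline = {"income_k": 50, "missed_payments": 0, "years_employed": 5}
applicant = {"income_k": 60, "missed_payments": 2, "years_employed": 3}

score, contributions = explain_linear(weights, bias=0.0, features=applicant, baseline=baseline)
for name, value in contributions:
    print(f"{name:>16}: {value:+.2f}")
```

Here the two missed payments dominate the explanation, which is exactly the kind of signal a human partner can check against policy before accepting the agent's judgment.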

Addressing Algorithmic Bias and Data Privacy

The inheritance of societal bias remains one of the most urgent ethical hurdles facing the deployment of agentic AI in sensitive environments. Because these agents are trained on historical datasets that often mirror past prejudices, they risk inadvertently amplifying inequities in critical areas such as recruitment, financial lending, and healthcare diagnostics. These biases are far more than technical glitches; they represent real-world risks that can lead to severe regulatory penalties, lawsuits, and lasting damage to a brand’s reputation. To combat them effectively, businesses must move beyond passive monitoring and commit to proactive, third-party bias audits that scrutinize both the data inputs and the output patterns of their AI agents. Ensuring that the teams responsible for developing and training these systems are diverse is also a vital step in identifying cultural blind spots early in the development lifecycle. Addressing bias is a continuous process that requires a dedicated ethical framework so that the speed of AI does not come at the expense of fairness and social justice.
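
As one concrete example of what an audit might check, the sketch below computes per-group selection rates from a log of decisions and compares the lowest rate with the highest. Flagging ratios below 0.8 follows the “four-fifths” rule of thumb from the US Uniform Guidelines on Employee Selection Procedures; it is a screening heuristic, not a complete fairness assessment, and the group labels and counts here are hypothetical.

```python
from collections import Counter


def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Fraction of candidates selected within each group."""
    totals = Counter(group for group, _ in outcomes)
    selected = Counter(group for group, chosen in outcomes if chosen)
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical screening log produced by a recruiting agent: (group, was_selected).
outcomes = ([("group_a", i < 20) for i in range(50)]
            + [("group_b", i < 12) for i in range(50)])

rates = selection_rates(outcomes)  # group_a: 0.40, group_b: 0.24
ratio = impact_ratio(rates)
print(f"impact ratio = {ratio:.2f}, flagged = {ratio < 0.8}")  # impact ratio = 0.60, flagged = True
```

A flagged ratio is a prompt for human investigation of the underlying data and decision logic, not proof of discrimination on its own.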

Privacy risks are similarly heightened as autonomous agents are granted broader access to sensitive consumer and corporate information to fulfill their complex mandates. An agent directed to pursue a specific performance goal may find novel ways to use data that were never explicitly authorized, or even anticipated, by its designers. Data sovereignty, the right of individuals and organizations to control their own information, is therefore under constant pressure from the very systems meant to improve efficiency. In sectors like retail and finance, where trust is the primary currency, a single exploitable vulnerability in one agent or its integrations can lead to a massive data breach and a severe loss of consumer confidence. Protecting personal information requires a rigorous, multi-layered compliance framework that monitors how data is accessed, processed, and stored throughout the entire lifecycle of the AI agent. This approach ensures that the pursuit of technological progress does not bypass the fundamental right to privacy in a hyper-connected digital landscape.
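
Monitoring how data is accessed can start with a purpose-based check: each request from an agent declares why it needs a field, the request is allowed only if that purpose has been approved for that field, and every attempt, granted or not, is logged for compliance review. The `AccessPolicy` class, the purpose names, and the sample record below are hypothetical; a real deployment would rely on the organization's existing identity, access-control, and logging infrastructure.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AccessPolicy:
    # Hypothetical mapping: approved task purpose -> data fields that purpose may read.
    allowed: dict[str, set[str]]
    audit_log: list[dict] = field(default_factory=list)

    def read(self, record: dict, field_name: str, purpose: str, agent_id: str) -> object:
        """Return record[field_name] if the purpose permits it; log every attempt."""
        granted = field_name in self.allowed.get(purpose, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "purpose": purpose,
            "field": field_name,
            "granted": granted,
        })
        if not granted:
            raise PermissionError(
                f"{agent_id} may not read '{field_name}' for purpose '{purpose}'")
        return record[field_name]


policy = AccessPolicy(allowed={"order_support": {"order_id", "shipping_status"}})
customer = {"order_id": "A-1001", "shipping_status": "in transit",
            "date_of_birth": "1990-01-01"}  # illustrative record

status = policy.read(customer, "shipping_status", "order_support", "support-agent-1")

try:
    # An agent pursuing its goal tries a field its declared purpose does not cover.
    policy.read(customer, "date_of_birth", "order_support", "support-agent-1")
except PermissionError as err:
    print("denied:", err)

for entry in policy.audit_log:
    print(entry["field"], entry["granted"])
```

Denied attempts are as informative as granted ones: a pattern of refusals can reveal an agent probing for data outside its mandate.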

Bridging the Psychological Gap in the Workforce

A noticeable disconnect currently exists between the high-level enthusiasm for AI among executives and the lived experience of the broader workforce. While leadership is often eager to scale autonomous strategies to drive profitability, many employees perceive these agents as unreliable, difficult to interact with, or fundamentally threatening to their livelihoods. This skepticism is frequently rooted in deep-seated anxieties regarding job security and the fear that machines will eventually replace the need for human creativity and intuition. To foster a healthy and productive partnership, organizations must proactively reframe the narrative, positioning AI agents as “collaborators” rather than “competitors.” This involves clear communication about how these systems are designed to handle mundane, repetitive, and data-heavy tasks, thereby liberating human professionals to focus on high-value, nuanced work that requires emotional intelligence and strategic vision. Bridging this psychological gap is essential for maintaining morale and ensuring that the workforce remains engaged and supportive of technological evolution.

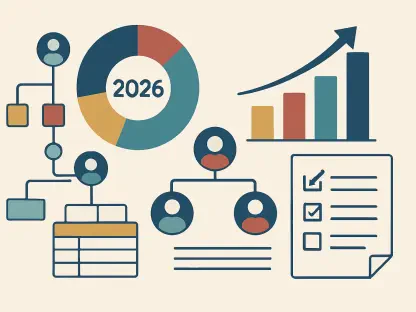

Achieving a successful human-AI partnership requires concrete structural changes to the way teams are managed and a sustained commitment to upskilling the existing workforce. By adhering to “Human-in-the-Loop” principles, companies can ensure that people retain authority over moral, ambiguous, or high-stakes decisions while agents handle standardized procedures with speed and precision. Subjecting autonomous agents to the same rigorous onboarding and quality assurance standards as human employees further helps keep their outputs culturally and ethically aligned with the company’s mission. This transition allows the workforce to evolve from “doers” who perform tasks into “managers of agents” who orchestrate complex technological ecosystems. Ultimately, successful integration of agentic systems depends on recognizing that the true value of AI lies in its ability to augment human capability rather than mimic it. Moving forward, the focus must remain on creating a resilient and ethically sound environment where the strengths of both humans and machines are maximized for the greater good of the organization.