The digital architecture of the modern corporation faces a reckoning as autonomous agentic workflows begin to execute high-stakes decisions based on data that often lacks the basic rigor of human verification. While the past few years saw a frantic rush toward experimental AI pilots, the current landscape demands a shift from novelty to reliability. The volume of unstructured data (text, video, and audio) has quadrupled, overwhelming the manual oversight mechanisms that once served as the primary defense against misinformation. Consequently, enterprises find themselves at a crossroads: either they master automated, federated governance, or they risk the catastrophic fallout of a $10 million data breach or a multi-billion-euro fine triggered by a single ungoverned algorithm.

The paradox of the current fiscal year lies in the contrast between soaring IT spending and a persistent trust deficit regarding corporate information. Decision-makers are increasingly wary of the outputs of their own systems, fearing that “garbage in, garbage out” has evolved into a sophisticated, automated cycle that could undermine strategic integrity. As AI moves from a consultative tool to an active participant in business operations, a robust governance framework has become the difference between competitive dominance and institutional irrelevance.

The High Cost of the “Garbage In, Garbage Out” Reality in the Age of AI

The transition from isolated experiments to integrated agentic workflows has stripped away the luxury of human-led data cleansing. In an environment where AI agents process thousands of transactions per second, any flaw in the underlying data is amplified at a scale that traditional IT teams cannot hope to contain. This acceleration has outgrown the old “garbage in, garbage out” paradigm: flawed data no longer yields a single bad report but autonomous errors that can propagate through an entire supply chain before a human identifies the anomaly. The financial implications are no longer theoretical; they are reflected in rising insurance premiums and the legal reserves allocated for AI-related liabilities.

Traditional manual oversight is failing not just because of speed but because of the unprecedented growth of unstructured information. With 80% of enterprise data now residing in unstructured formats such as video recordings and internal chat logs, the rigid, table-oriented structures of the past can no longer keep up. IT departments are spending more than ever, yet the quality of insights remains stagnant because governance tools have not kept pace with the complexity of synthetic and human-generated content. For organizations that allow their AI to operate in a vacuum of accountability, a $10 million data breach is no longer a worst-case scenario; it is a statistical likelihood.

The persistent trust deficit within the modern enterprise stems largely from a lack of verifiable data lineage. If a business unit cannot prove where a specific data point originated or how it was transformed, the resulting AI model is effectively a black box. This lack of transparency leads to internal friction, as departmental leaders hesitate to rely on centralized analytics for their most critical KPIs. The challenge for the contemporary board of directors is to reconcile the mandate for AI-driven efficiency with the absolute requirement for data integrity and regulatory compliance.
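To make “verifiable lineage” concrete, the sketch below attaches a history of hops to each data point so that its origin and every transformation can be queried. It is a minimal illustration, not a lineage product. The `DataPoint` and `LineageStep` classes and the system names (`erp_ledger`, `finance_etl`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class LineageStep:
    """One hop in a data point's history: which system touched it and how."""
    system: str     # hypothetical system name, e.g. "erp_ledger"
    operation: str  # e.g. "extract", "subtract_refunds"


@dataclass
class DataPoint:
    name: str
    value: float
    lineage: List[LineageStep] = field(default_factory=list)

    def transformed(self, system: str, operation: str, new_value: float) -> "DataPoint":
        # Return a new data point carrying the full history plus this step;
        # the original point and its lineage list are left untouched.
        return DataPoint(self.name, new_value, self.lineage + [LineageStep(system, operation)])

    def origin(self) -> Optional[str]:
        # The first recorded system is the point of origin; None means unknown.
        return self.lineage[0].system if self.lineage else None

    def is_verifiable(self) -> bool:
        # In this sketch, a data point counts as verifiable only if its origin is recorded.
        return self.origin() is not None


raw = DataPoint("q3_revenue", 1_000_000.0, [LineageStep("erp_ledger", "extract")])
net = raw.transformed("finance_etl", "subtract_refunds", 950_000.0)
orphan = DataPoint("q3_revenue", 990_000.0)  # same metric, no recorded history

print(net.origin(), [s.operation for s in net.lineage])
print(orphan.is_verifiable())
```

A data point with an empty history is exactly the black box described above. It may be correct, but nobody can prove it.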

Why Traditional Centralized Management Is Obsolete for Modern Data Ecosystems

Rigid, top-down data silos are collapsing under the weight of decentralized business units that need immediate access to specialized information. The old model, in which a central “data warehouse” team acts as gatekeeper for every query, has become a bottleneck that stifles innovation and pushes departments toward “shadow IT” solutions. These fragmented practices are a major source of AI hallucinations, because models that draw on conflicting sources produce insights that are plausible but factually incorrect. In a global economy, the delay caused by centralized management is as damaging as the inaccuracies it seeks to prevent.

The modern imperative has shifted toward a “zero-trust” framework for data, which requires real-time authentication of both human-generated and synthetic assets. As AI agents increasingly generate their own datasets, the distinction between original and derivative work blurs, creating a nightmare for compliance officers. A centralized team sitting in a different time zone cannot possibly understand the nuance of a localized marketing dataset or a specific engineering log. This disconnect necessitates a model that recognizes the sovereignty of business units while enforcing a common language for security and quality.
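Under a zero-trust posture, no dataset is trusted because of where it sits. Each asset is authenticated when it is read. The sketch below uses an HMAC-SHA256 tag from Python's standard library as a simplified stand-in for the signing infrastructure a real deployment would use. The producer names and the hard-coded demo keys are hypothetical; production keys would live in a secrets manager.

```python
import hashlib
import hmac

# Hypothetical per-producer keys (demo values only; never hard-code real keys).
PRODUCER_KEYS = {
    "marketing_emea": b"demo-key-marketing",
    "agent_pricing_bot": b"demo-key-agent",
}


def sign(producer: str, payload: bytes) -> str:
    """Producer side: attach an HMAC-SHA256 tag to the asset it publishes."""
    return hmac.new(PRODUCER_KEYS[producer], payload, hashlib.sha256).hexdigest()


def authenticate(producer: str, payload: bytes, tag: str) -> bool:
    """Consumer side: verify every asset on every read, human- or agent-made alike."""
    key = PRODUCER_KEYS.get(producer)
    if key is None:
        return False  # unknown producer: never trusted by default
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)  # constant-time comparison


record = b'{"sku": "A-17", "price": 19.99}'
tag = sign("agent_pricing_bot", record)
print(authenticate("agent_pricing_bot", record, tag))         # genuine asset
print(authenticate("agent_pricing_bot", record + b" ", tag))  # altered payload
print(authenticate("unknown_agent", record, tag))             # unregistered producer
```

Synthetic data from an AI agent goes through exactly the same check as a human-curated file. That symmetry is the point of treating both as assets to authenticate.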

Managing the complexity of text, video, and audio requires a sophisticated understanding of context that centralized governance models lack. These assets make up the vast majority of enterprise information, yet they are often the least governed. When fragmented data practices take hold, the resulting AI models often reflect the biases and errors of the most disorganized departments. Moving toward a more agile, distributed approach allows the organization to authenticate data at the source, ensuring that the information used to train strategic models is as fresh and accurate as possible.

The Federated Blueprint: Balancing Local Agility with Global Standards

The shift toward federated governance begins with a fundamental change in organizational leadership, moving AI oversight from a hidden IT task to a prominent C-suite mandate. This transition requires the appointment of an AI governance leader who can harmonize the often-conflicting priorities of innovation, risk management, and legal compliance. By establishing a cross-functional steering committee, enterprises can resolve departmental data conflicts before they reach the production stage. This committee serves as the judicial branch of the data ecosystem, ensuring that every business unit operates under the same high-level policies while retaining the freedom to innovate.

Operationalizing this vision requires the empowerment of distributed data stewards who are embedded directly within the business units they serve. These individuals act as the bridge between technical data definitions and business-ready insights, translating corporate policy into specific, actionable workflows. By maintaining quality at the source, data stewards prevent the “pollution” of the broader ecosystem, ensuring that any data entering the lakehouse is already certified and categorized. This distributed accountability ensures that the people who understand the data best are the ones responsible for its integrity.
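A steward's job of maintaining quality at the source can be expressed as a certification gate. A batch is categorized and admitted to the lakehouse only if it passes the rules its owning unit defines. The sketch assumes rules are simple per-row predicates. `StewardPolicy`, `certify`, and the example rules are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A quality rule takes one row and returns True when the row passes.
Rule = Callable[[dict], bool]


@dataclass
class StewardPolicy:
    """Rules a business-unit data steward owns for one dataset (illustrative)."""
    dataset: str
    category: str  # e.g. "customer", "finance"
    rules: Dict[str, Rule]


def certify(policy: StewardPolicy, rows: List[dict]) -> dict:
    """Check every row at the source; only fully passing batches are certified."""
    failures = {
        name: sum(1 for row in rows if not rule(row))
        for name, rule in policy.rules.items()
    }
    return {
        "dataset": policy.dataset,
        "category": policy.category,
        "certified": all(count == 0 for count in failures.values()),
        "failures": {name: count for name, count in failures.items() if count},
    }


policy = StewardPolicy(
    dataset="emea_leads",
    category="customer",
    rules={
        "email_present": lambda r: bool(r.get("email")),
        "country_is_iso2": lambda r: len(r.get("country", "")) == 2,
    },
)
batch = [
    {"email": "a@example.com", "country": "DE"},
    {"email": "", "country": "France"},  # fails both rules
]
print(certify(policy, batch))
```

A rejected batch comes back with per-rule failure counts. The steward who owns the rules can then fix the data where it originates, before it pollutes the shared lakehouse.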

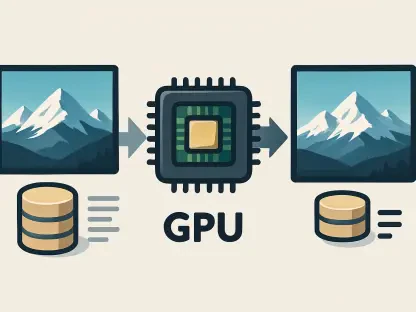

The architectural foundation of this federated model is the data lakehouse, which functions as a single source of truth for both analytical and AI tasks. By eliminating redundant copies of data across the organization, the lakehouse architecture reduces the risk of fragmentation and lowers storage costs. Crucially, active metadata serves as a real-time control plane, giving the organization a dashboard that monitors data health across all regions. When a metadata alert signals a quality drop, the system can automatically pause AI workflows, preventing the spread of flawed information.
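Pausing workflows when quality drops works much like a circuit breaker keyed on metadata events. In the sketch below, the quality score, the 0.95 threshold, and the dataset-to-workflow map are illustrative assumptions. A real control plane would receive these events from its metadata platform rather than from direct method calls.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class QualityCircuitBreaker:
    """Blocks AI workflows whose input datasets currently report poor quality."""
    threshold: float = 0.95  # illustrative minimum quality score
    # dataset -> workflows that read it (hypothetical names)
    consumers: Dict[str, List[str]] = field(default_factory=dict)
    unhealthy: Set[str] = field(default_factory=set)

    def on_metadata_event(self, dataset: str, quality_score: float) -> None:
        # Record each dataset's health as the metadata layer reports it.
        if quality_score < self.threshold:
            self.unhealthy.add(dataset)
        else:
            self.unhealthy.discard(dataset)

    def may_run(self, workflow: str) -> bool:
        # A workflow runs only if none of the datasets it reads are unhealthy.
        return not any(workflow in self.consumers.get(ds, []) for ds in self.unhealthy)


breaker = QualityCircuitBreaker(consumers={
    "orders": ["pricing_agent", "forecast_agent"],
    "returns": ["forecast_agent"],
})
breaker.on_metadata_event("returns", 0.81)  # quality drop on one input
print(breaker.may_run("pricing_agent"), breaker.may_run("forecast_agent"))
breaker.on_metadata_event("returns", 0.99)  # dataset recovers
print(breaker.may_run("forecast_agent"))
```

The breaker tracks unhealthy datasets rather than paused workflows. This design keeps a workflow blocked for as long as any one of its inputs is still failing, even if another input recovers.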

Industry Consensus: The Critical Link Between Data Readiness and ROI

Expert findings suggest a stark divide between organizations that prioritize governance and those that treat it as a secondary concern. Current research indicates that 60% of organizations will fail to realize any significant value from their AI investments by the end of next year if they do not implement a cohesive governance program. The shift toward conversational analytics, where natural language queries replace traditional dashboards, has made metadata accuracy the primary determinant of success. If the underlying data structure is flawed, the natural language query will return a misleading answer, potentially leading to disastrous strategic shifts.
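One way to keep conversational analytics from guessing is to route every business term in a question through the metadata catalog. The system then refuses to answer unless the term resolves to exactly one certified definition. The catalog contents and the `resolve` function below are hypothetical; what matters is the rule, not the data structure.

```python
from typing import Dict, List

# Hypothetical metadata catalog: business term -> candidate metric definitions.
CATALOG: Dict[str, List[dict]] = {
    "revenue": [  # two departments certified competing definitions
        {"metric": "gross_revenue", "sql": "SUM(order_total)", "certified": True},
        {"metric": "net_revenue", "sql": "SUM(order_total - refunds)", "certified": True},
    ],
    "churn": [
        {"metric": "monthly_churn", "sql": "AVG(is_churned)", "certified": True},
        {"metric": "churn_legacy", "sql": "AVG(cancelled)", "certified": False},
    ],
}


def resolve(term: str) -> dict:
    """Map a term from a natural-language question to exactly one certified metric."""
    candidates = [d for d in CATALOG.get(term.lower(), []) if d["certified"]]
    if len(candidates) == 1:
        return {"status": "ok", **candidates[0]}
    if not candidates:
        return {"status": "refused", "reason": f"no certified definition for '{term}'"}
    names = ", ".join(d["metric"] for d in candidates)
    return {"status": "refused", "reason": f"'{term}' is ambiguous: {names}"}


for term in ("churn", "Revenue", "margin"):
    print(term, "->", resolve(term))
```

Refusing an ambiguous question is less convenient than answering it, but a wrong answer delivered in fluent natural language is the misleading result described above.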

Data trust levels vary wildly depending on the maturity of the governance program. Organizations with formal federated models report a 71% trust level in their internal data, compared to a mere 50% in companies that still rely on ad hoc management. This trust gap has direct implications for the speed of AI adoption; teams that trust their data are more likely to deploy autonomous agents in customer-facing roles. Conversely, organizations without governance remain stuck in a cycle of endless “proof of concept” trials, unable to move into full production due to lingering fears of inaccuracy.

The legal landscape is also providing a powerful incentive for reform, with the EU AI Act and GDPR setting a global standard for financial penalties. The escalating consequences of non-compliance mean that a single lapse in data governance can result in fines that erase an entire year of profit. Case study observations have shown that the most resilient companies are those that view compliance not as a hurdle, but as a framework for building superior data products. By aligning governance with international regulations, these enterprises create a “safe harbor” for their AI initiatives to grow.
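The claim that one fine can erase a year of profit is simple arithmetic. Both regulations cap fines at a fixed amount or a percentage of worldwide annual turnover, whichever is higher. Under GDPR Article 83(5), the cap is up to €20 million or 4%. For prohibited practices under the EU AI Act, it is up to €35 million or 7%. The sketch covers only that “whichever is higher” case for large undertakings, and it computes ceilings, not likely penalties. The €2 billion turnover and 3% margin are hypothetical.

```python
def max_fine_eur(turnover_eur: int, fixed_cap_eur: int, pct_of_turnover: int) -> int:
    """Ceiling of a fine under a 'fixed cap or % of turnover, whichever is higher' rule."""
    return max(fixed_cap_eur, turnover_eur * pct_of_turnover // 100)


# (fixed cap in EUR, percent of worldwide annual turnover) for large undertakings
GDPR_ART_83_5 = (20_000_000, 4)
AI_ACT_PROHIBITED_PRACTICES = (35_000_000, 7)

turnover = 2_000_000_000             # hypothetical worldwide annual turnover (EUR)
annual_profit = turnover * 3 // 100  # hypothetical 3% net margin

for name, (cap, pct) in {"GDPR": GDPR_ART_83_5,
                         "EU AI Act": AI_ACT_PROHIBITED_PRACTICES}.items():
    ceiling = max_fine_eur(turnover, cap, pct)
    print(f"{name}: ceiling EUR {ceiling:,} vs annual profit EUR {annual_profit:,}")
```

For this hypothetical company, either ceiling exceeds a full year's profit. With €100 million in turnover, by contrast, the fixed €20 million GDPR cap is the higher of the two figures.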

Strategic Steps for Implementing a Scalable Governance Framework

Implementing a scalable governance framework begins with a clear mission statement that aligns AI goals with the broader business strategy. This alignment ensures that every data initiative contributes directly to the bottom line rather than existing as a vanity project. Enterprises that thrive invest heavily in wide-scale AI fluency training, fostering a culture in which every employee feels responsible for the quality of the data they handle. This cultural shift is just as important as the technical implementation of the data lakehouse itself.

Automating routine tasks such as schema mapping and data labeling frees strategic talent to focus on higher-level problem-solving rather than manual entry. A platform-agnostic control plane lets organizations maintain a consistent security posture across hybrid and multi-cloud environments. This consistency is vital for navigating the evolving global regulatory landscape, because it provides the auditable reporting structures that government agencies require. Leaders who prioritize these steps find that their AI systems are not only more accurate but also more adaptable to changing market conditions.
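Automated schema mapping can start as simply as normalizing column names and fuzzy-matching them against a canonical schema, with anything below a similarity cutoff routed to a human. The sketch uses `difflib.get_close_matches` from Python's standard library. The canonical column list and the 0.75 cutoff are illustrative assumptions, and real mapping tools also compare data types and values, not just names.

```python
import difflib
import re
from typing import Dict, List, Optional

# Hypothetical canonical schema that every business unit maps into.
CANONICAL = ["customer_id", "email_address", "country_code", "signup_date"]


def normalize(col: str) -> str:
    # "CustomerID", "customer-id", "Customer ID" -> "customer_id"
    col = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", col.strip())  # split camelCase
    return re.sub(r"[^a-z0-9]+", "_", col.lower()).strip("_")


def map_schema(source_cols: List[str], cutoff: float = 0.75) -> Dict[str, Optional[str]]:
    """Propose a canonical column for each source column; None means 'needs a human'."""
    mapping: Dict[str, Optional[str]] = {}
    for col in source_cols:
        match = difflib.get_close_matches(normalize(col), CANONICAL, n=1, cutoff=cutoff)
        mapping[col] = match[0] if match else None
    return mapping


print(map_schema(["CustomerID", "E-mail Address", "SignupDate", "cntry"]))
```

Here `cntry` falls below the cutoff and is left for a data steward to map. The threshold trades automation rate against the risk of a confident but wrong mapping.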

The final phase of the transition establishes rigorous reporting structures that offer transparency to both internal stakeholders and external regulators. This commitment to accountability transforms data from a liability into a competitive advantage. Organizations that master this transition move away from the fear of AI-driven errors and toward a future of autonomous excellence. The journey toward federated governance is ultimately a move from centralized control to shared responsibility, in which the integrity of information becomes the primary pillar of corporate success.
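Auditable reporting is easier to defend when the log itself is tamper-evident. A common technique is a hash chain, in which each entry's SHA-256 hash covers the previous entry's hash. Editing or removing any earlier entry then invalidates the chain. The sketch below is a minimal in-memory illustration with hypothetical event fields. To detect truncation of the newest entries, the latest hash would also need to be published or stored elsewhere.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes the same way.
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_entry(log: List[dict], event: dict) -> None:
    """Append an audit event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _entry_hash(event, prev_hash)})


def verify(log: List[dict]) -> bool:
    """Recompute the chain; an edited or removed entry breaks verification."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log: List[dict] = []
append_entry(log, {"actor": "pricing_agent", "action": "read", "dataset": "orders"})
append_entry(log, {"actor": "steward_emea", "action": "certify", "dataset": "emea_leads"})
print(verify(log))
log[0]["event"]["action"] = "delete"  # simulate after-the-fact tampering
print(verify(log))
```

A regulator or internal auditor only needs the log and the most recent hash to check that nothing in the recorded history was rewritten.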