Modern software architectures have reached a level of complexity where traditional security scanners often struggle to distinguish between a genuine threat and a harmless line of code. For decades, the industry relied on Static Application Security Testing (SAST) to map data flows from untrusted inputs to sensitive outputs. However, the emergence of AI-driven reasoning marks a departure from this “source-to-sink” model, favoring a system that understands the logical intent of a developer. This review examines how this shift toward cognitive analysis is fundamentally changing the way we secure digital infrastructure.

The Evolution of Vulnerability Detection: From Legacy SAST to AI Reasoning

The transition from pattern matching to contextual understanding represents a major paradigm shift in application security. Traditional tools lean heavily on pattern matching, searching for known-bad signatures, simple misconfigurations, or predefined source-to-sink flows. While effective for catching low-hanging fruit, they are notoriously blind to subtle logic errors. AI-driven analysis, by contrast, aims to replicate the reasoning of a human researcher, assessing how different components of a system interact rather than looking at code in a vacuum.

This evolution was accelerated by the realization that calling a “sanitizer” function does not inherently make a program safe. Modern systems now prioritize architectural context over isolated fragments. OpenAI’s Codex Security, for instance, emerged as a response to the inherent noise of legacy methodologies. By focusing on the “why” behind the code, these tools can identify where validation occurs in the wrong order or where a trust boundary has been incorrectly defined, moving beyond the binary “safe or unsafe” labels of the past.

Core Methodologies of AI-Driven Analysis

Full Contextual Analysis and Architectural Mapping

AI systems today evaluate an entire repository’s architecture to establish a baseline of trust boundaries. This holistic view allows the engine to ignore misleading comments or outdated documentation that might confuse a human auditor. Instead of merely tracking a variable, the AI maps out the entire execution path, identifying how an application handles state across multiple modules. This capability is crucial for finding flaws that only manifest when disparate parts of a codebase interact in unexpected ways.
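As a toy model of the kind of question such an architectural map answers, the sketch below walks a small call graph and reports every path from an untrusted entry point to a sensitive operation that never passes through a validator. The graph, the function names, and the `unvalidated_paths` helper are all hypothetical and exist only for illustration; a real engine would derive this structure from the repository itself rather than from a hand-written dictionary.

```python
from typing import Dict, List, Set

# Hypothetical call graph: each key calls the functions in its list.
CALL_GRAPH: Dict[str, List[str]] = {
    "http_handler": ["parse_request", "admin_shortcut"],
    "parse_request": ["validate_user"],
    "validate_user": ["update_account"],
    "admin_shortcut": ["update_account"],
    "update_account": [],
}
VALIDATORS: Set[str] = {"validate_user"}


def unvalidated_paths(
    graph: Dict[str, List[str]], entry: str, sink: str, validators: Set[str]
) -> List[List[str]]:
    """Depth-first search for entry-to-sink paths that never pass a validator."""
    found: List[List[str]] = []

    def walk(node: str, path: List[str]) -> None:
        if node in validators:
            return  # this branch crosses the trust boundary through a check
        path = path + [node]
        if node == sink:
            found.append(path)
            return
        for callee in graph.get(node, []):
            if callee not in path:  # skip cycles
                walk(callee, path)

    walk(entry, [])
    return found


for path in unvalidated_paths(CALL_GRAPH, "http_handler", "update_account", VALIDATORS):
    print(" -> ".join(path))
# prints: http_handler -> admin_shortcut -> update_account
```

Here the flaw is visible only when the modules are viewed together: each function looks reasonable in isolation, but the shortcut route reaches the account update without any check.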

Constraint Reasoning and Micro-fuzzing

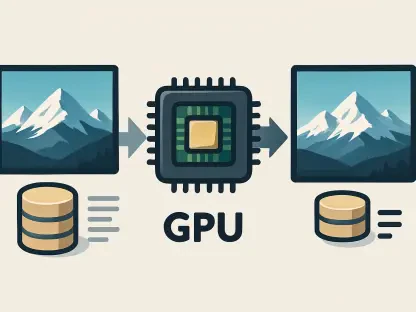

Technical sophistication is further enhanced through the use of SMT solvers such as Z3 (distributed for Python as the z3-solver package). By applying mathematical constraint reasoning, the AI can calculate the exact conditions required for an integer overflow or a buffer overrun to occur. To supplement this theoretical approach, many platforms employ micro-fuzzing. This involves isolating specific code slices and subjecting them to real-time data transformations in a controlled environment. This dual approach helps ground the analysis in checkable evidence rather than probabilistic guessing alone.
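The pairing of constraint reasoning with micro-fuzzing can be illustrated with a minimal sketch. It assumes a hypothetical code slice, `alloc_size`, that multiplies two values with 32-bit wraparound. For brevity it solves the overflow condition with plain integer arithmetic instead of an SMT solver, then fuzzes the slice with random inputs to check that every observed wraparound falls inside the solved range. All names are invented for this example.

```python
import random
from typing import List

UINT32_MAX = 2**32 - 1


def alloc_size(count: int, elem_size: int) -> int:
    """Code slice under test: a size computation that wraps like a C uint32_t."""
    return (count * elem_size) & UINT32_MAX


def min_overflowing_count(elem_size: int) -> int:
    """Solve count * elem_size > UINT32_MAX for the smallest integer count."""
    if elem_size <= 0:
        raise ValueError("elem_size must be positive")
    return UINT32_MAX // elem_size + 1


def micro_fuzz(elem_size: int, trials: int = 10_000, seed: int = 0) -> List[int]:
    """Feed random counts to the slice and collect those whose result wrapped."""
    rng = random.Random(seed)
    wrapped = []
    for _ in range(trials):
        count = rng.randrange(0, 2**32)
        if alloc_size(count, elem_size) != count * elem_size:
            wrapped.append(count)
    return wrapped


elem_size = 16
boundary = min_overflowing_count(elem_size)
hits = micro_fuzz(elem_size)
print(boundary)                             # 268435456
print(all(c >= boundary for c in hits))     # True
```

The solved boundary gives the exact trigger condition, while the fuzzing pass acts as an independent check that the slice really behaves as the constraint predicts.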

Sandboxed Execution and Exploit Verification

One of the most significant breakthroughs in recent years is the transition from identifying potential risks to generating automated proof-of-concept (PoC) exploits. By executing suspected vulnerable code in a secure sandbox, the AI can verify if a flaw is “reachable” and exploitable in a production setting. This verification step drastically reduces the burden on security teams by filtering out non-exploitable code patterns that would otherwise clutter a vulnerability report with false positives.
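The verification step can be sketched as follows, under strong simplifying assumptions. A hypothetical vulnerable slice is run in a child Python interpreter with a candidate PoC input, and the finding counts as confirmed only if that input actually makes the slice fail. A child process with a timeout is not a real sandbox; production systems would add proper isolation (containers, restricted system calls, no network access). The slice and the `reproduces_crash` helper are invented for this example.

```python
import subprocess
import sys

# Hypothetical vulnerable slice: writes into a fixed-size table without a bounds check.
SUSPECT_SOURCE = """
import sys
table = [0] * 8
index = int(sys.argv[1])
table[index] = 1  # raises IndexError when index is out of range
print("ok")
"""


def reproduces_crash(poc_argument: str, timeout_s: float = 5.0) -> bool:
    """Run the slice in a child interpreter; True if the PoC input makes it fail."""
    try:
        result = subprocess.run(
            # -I runs the child in isolated mode (no user site-packages or env vars).
            [sys.executable, "-I", "-c", SUSPECT_SOURCE, poc_argument],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return False  # a hang is treated as "not confirmed" in this sketch
    return result.returncode != 0 and "IndexError" in result.stderr


print(reproduces_crash("64"))  # out-of-range index: finding confirmed
print(reproduces_crash("3"))   # in-range index: nothing to report
```

Only inputs that demonstrably trigger the failure are reported, which is how this style of verification filters out patterns that look dangerous but cannot be reached.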

Current Industry Trends and the Competitive Landscape

The market for AI-enhanced security is experiencing explosive growth, with projections suggesting it will exceed $1.5 billion by 2030. As organizations move toward 2027 and 2028, the demand for high-fidelity tools has created a fierce competitive environment. Newer platforms like Theori’s Xint Code are challenging established players by offering deeper integration into the development lifecycle. This competition is driving a shift away from hybrid systems, as experts argue that seeding AI with old SAST data creates “premature narrowing” and prevents the discovery of novel bug classes.

Real-World Applications and Implementation

The practical value of AI reasoning was highlighted by CVE-2024-29041, an open-redirect flaw in Express.js triggered by malformed URLs. Traditional scanners missed the flaw because validation occurred before URL decoding, a nuance that required an understanding of execution order. Such capabilities are now essential in sectors like fintech and cloud infrastructure, where complex authorization gaps and workflow bypasses can lead to catastrophic data breaches. Implementing these tools allows developers to catch architectural flaws before they are ever committed to a main branch.
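The ordering problem described above, validating a value before decoding it, can be shown in a few lines. The sketch below is a generic Python illustration of that bug class, not a reproduction of the Express.js issue. The allow-list rule and the function names are assumptions made for the example.

```python
from typing import Optional
from urllib.parse import unquote

ALLOWED_PREFIX = "/app/"  # hypothetical allow-list rule for this sketch


def is_allowed(path: str) -> bool:
    """Accept only paths under /app/ that contain no parent-directory segment."""
    return path.startswith(ALLOWED_PREFIX) and ".." not in path.split("/")


def check_then_decode(raw: str) -> Optional[str]:
    """Flawed order: the check sees the encoded form, the caller gets the decoded form."""
    if not is_allowed(raw):
        return None
    return unquote(raw)


def decode_then_check(raw: str) -> Optional[str]:
    """Safer order: decode first so the check sees the value that will be used."""
    decoded = unquote(raw)
    return decoded if is_allowed(decoded) else None


payload = "/app/%2e%2e/secret"
print(check_then_decode(payload))  # /app/../secret
print(decode_then_check(payload))  # None
```

Both functions call the same validator, so a scanner that only asks whether a sanitizer is present would rate them identically. The difference lies entirely in the order of operations.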

Technical Challenges and Strategic Limitations

Despite its prowess, AI-driven analysis carries a significant computational overhead. The “black box” nature of neural reasoning can also make it difficult for developers to understand why a specific pattern was flagged compared to the deterministic output of a standard linter. Furthermore, integrating these heavy-duty tools into rapid CI/CD pipelines remains a challenge. There is a persistent risk of over-reliance on AI, where teams might neglect basic coding standards in the hope that an automated system will catch every mistake during the final audit.

Future Outlook and the Path Toward Autonomous Security

The industry is moving toward self-healing code ecosystems where AI not only finds a bug but also proposes and tests a verified patch. As behavioral understanding becomes the new baseline, the bug bounty economy may shift away from simple script-kiddie discoveries toward complex, multi-stage exploit chains that only advanced reasoning can uncover. Long-term, the goal is a fully autonomous security layer that evolves alongside the software it protects, reducing the window of exposure from days to seconds.

Final Assessment: A Necessity for Modern Software

The transition from legacy static analysis to deep, AI-driven constraint reasoning is a necessary response to the growing complexity of modern applications. High-fidelity, low-noise analysis can turn security from a bottleneck into an integrated component of the development process. Organizations that adopt these reasoning-based tools early stand to gain a distinct advantage in identifying subtle logic flaws that traditional scanners consistently overlook. Moving forward, the focus will likely shift toward perfecting the integration of these autonomous systems into every layer of the global digital infrastructure, ensuring that security keeps pace with innovation.