The arrival of the GitHub Copilot SDK marks a clear break from the period when artificial intelligence served merely as a reactive text generator within a coding window. The release represents a fundamental shift in how developers interact with large language models: away from simple autocomplete and toward deeply integrated, autonomous agents that can navigate complex software environments with minimal human intervention.

Evaluating the Strategic Value of Agentic AI Integration

Shifting from Reactive Chat to Autonomous Workflows

The primary value proposition of this new framework lies in its departure from the chat-based interface. Instead of waiting for a user to prompt a change, the SDK allows for the creation of agents that proactively manage tasks. These agents can analyze a codebase, identify necessary updates, and execute changes across multiple files without the need for constant back-and-forth dialogue. This transition reduces the cognitive load on developers, allowing them to focus on high-level architecture while the AI handles the mechanical aspects of implementation.

By embedding these capabilities directly into application logic, businesses can build tools that understand a project’s context far better than a general-purpose LLM prompted in isolation. This points toward software that can take on part of its own maintenance rather than remaining a static collection of features. The strategic advantage is not just speed but the ability to automate multi-step processes that previously required human oversight to keep them consistent.

Addressing the Challenges of Fragmented AI Infrastructure

Before the introduction of this SDK, developers often struggled with a fragmented ecosystem of AI tools and proprietary APIs. Maintaining custom infrastructure to handle prompt engineering, state management, and external tool connections was both time-consuming and prone to errors. GitHub addresses this pain point by providing a unified layer that standardizes how AI agents interact with the developer’s environment, effectively lowering the barrier to entry for advanced automation.

Furthermore, this centralized approach mitigates the security and reliability risks associated with “home-grown” AI integrations. By utilizing a standard protocol, teams can ensure that their AI workflows are predictable and easier to debug. This consolidation allows organizations to scale their AI efforts without reinventing the wheel for every new project, creating a more cohesive development lifecycle across the enterprise.

Technical Overview of the Copilot SDK and Its Core Capabilities

The Transition to Agentic Execution

The core innovation of the SDK is its focus on agentic execution, which empowers the AI to act as an independent operator within a defined scope. Unlike traditional integrations that only offer suggestions, these agents can plan complex operations and carry them out by invoking specific tools. This means an agent can look at a bug report, search the relevant files, write a fix, and then verify the results using a test suite, all within a single orchestrated workflow.

This capability rests on an orchestration layer that manages the sequence of operations. The SDK handles the heavy lifting of deciding which tool to use and when, based on the goals the developer defines. This shift toward autonomy is what distinguishes the current generation of software development kits from the thin API wrappers of the recent past.
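The plan, act, and verify loop described above can be illustrated with a small, library-free Python sketch. It does not use the Copilot SDK’s actual API: `next_step` is a hypothetical stand-in for the model’s planning, and `search_files`, `write_fix`, and `run_tests` are hypothetical stub tools. What it shows is the shape of the workflow: choose a tool based on what has happened so far, run it, record the result, and stop when nothing is left to do.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Step:
    tool: str              # name of a registered tool
    args: Dict[str, Any]   # arguments chosen for this call


# Hypothetical stub tools; a real agent would search, edit, and test actual files.
def search_files(query: str) -> Dict[str, Any]:
    return {"matches": ["src/parser.py"] if "parse" in query else []}


def write_fix(path: str) -> Dict[str, Any]:
    return {"patched": path}


def run_tests() -> Dict[str, Any]:
    return {"passed": True}


TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "search_files": search_files,
    "write_fix": write_fix,
    "run_tests": run_tests,
}


def next_step(history: List[Dict[str, Any]]) -> Optional[Step]:
    """Hypothetical stand-in for the model: pick the next call from prior results."""
    if not history:
        return Step("search_files", {"query": "parse error"})
    last = history[-1]
    if last["tool"] == "search_files" and last["matches"]:
        return Step("write_fix", {"path": last["matches"][0]})
    if last["tool"] == "write_fix":
        return Step("run_tests", {})
    return None  # tests have run (or nothing matched): stop


def run_agent(max_steps: int = 10) -> List[Dict[str, Any]]:
    """Plan, act, and record until the planner stops or the step budget runs out."""
    history: List[Dict[str, Any]] = []
    for _ in range(max_steps):
        step = next_step(history)
        if step is None:
            break
        result = TOOLS[step.tool](**step.args)
        history.append({"tool": step.tool, **result})
    return history


for entry in run_agent():
    print(entry)
```

The `max_steps` cap is a common safeguard against an agent looping indefinitely. In a real agent, each recorded result would be sent back to the model, which would choose the next step instead of following fixed rules.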

Multi-Language Support and the Model Context Protocol (MCP)

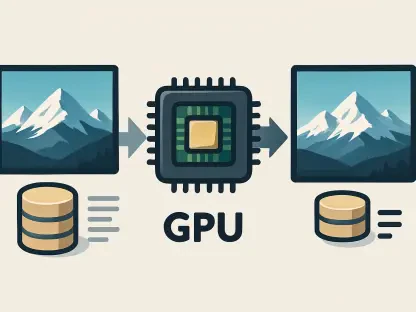

Technical versatility is a hallmark of this release: the SDK supports Node.js/TypeScript, Python, Go, and .NET. This broad compatibility lets diverse engineering teams adopt the framework without rewriting their existing stacks. The inclusion of the Model Context Protocol (MCP) is particularly noteworthy, as it gives agents a standardized way to access structured data such as API schemas and file systems.

By using MCP, the SDK helps avoid oversized, “noisy” prompts that often lead to hallucinations or lost context. Instead, the model receives precise, relevant data about the runtime environment, which can markedly improve the accuracy of its actions. The protocol acts as a bridge between the abstract reasoning of the model and the concrete reality of the code, making the integration more reliable for production use.
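To make the protocol concrete, the sketch below builds the JSON-RPC 2.0 messages that MCP uses to list a server’s tools (`tools/list`) and call one of them (`tools/call`). Only the message shapes are shown; transport, session initialization, and the server itself are omitted, and the `read_api_schema` tool with its `service` argument is a hypothetical example. Because the agent requests one service’s schema on demand, the model sees only that structured result rather than every schema pasted into its prompt.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_ids = count(1)  # JSON-RPC ids only need to be unique within a session


def make_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request object of the kind MCP exchanges."""
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Hypothetical tool descriptor, in the shape an MCP server returns from tools/list.
READ_SCHEMA_TOOL = {
    "name": "read_api_schema",
    "description": "Return the OpenAPI schema for a single service.",
    "inputSchema": {
        "type": "object",
        "properties": {"service": {"type": "string"}},
        "required": ["service"],
    },
}

list_req = make_request("tools/list")
call_req = make_request(
    "tools/call",
    {"name": READ_SCHEMA_TOOL["name"], "arguments": {"service": "billing"}},
)
print(json.dumps(call_req))
```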

Key Components: Orchestration, State Management, and Tool Invocation

Effective AI agents need more than a connection to a model; they need a way to track progress and act on the world. The SDK provides built-in orchestration and state management components that keep the agent on track during long-running tasks. If a process is interrupted or hits an error, the recorded state tells the agent where it left off and which steps remain, so it can recover instead of starting over.
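A minimal sketch of resuming after an interruption, assuming the agent’s progress can be expressed as an ordered list of named steps and persisted to a local JSON file. The function name and checkpoint format are hypothetical and are not part of the SDK.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List


def run_with_checkpoint(
    steps: List[str],
    actions: Dict[str, Callable[[], None]],
    ckpt: Path,
) -> List[str]:
    """Run named steps in order, skipping any already recorded in the checkpoint.

    Returns the names of the steps executed in this call. If a step raises,
    the exception propagates, but every earlier step stays recorded.
    """
    done: List[str] = json.loads(ckpt.read_text()) if ckpt.exists() else []
    ran: List[str] = []
    for name in steps:
        if name in done:
            continue  # finished in an earlier run
        actions[name]()
        done.append(name)
        # Write to a temp file, then rename, so the checkpoint is never half-written.
        tmp = ckpt.with_suffix(".tmp")
        tmp.write_text(json.dumps(done))
        tmp.replace(ckpt)
        ran.append(name)
    return ran
```

Saving after every step means a crash costs at most the step that was running. That step may run again on resume, so steps should be safe to repeat.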

Tool invocation is handled through a programmable layer that allows developers to define exactly what an agent is allowed to do. Whether it is reading a database, calling an external API, or modifying a configuration file, these actions are governed by strict definitions. This granular control is essential for building trust in autonomous systems, as it prevents the AI from performing unauthorized or destructive actions within the codebase.
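To illustrate the kind of rule such a layer enforces, here is a hypothetical permission check: an agent may call only the tools it has been granted, and tools whose names start with `write_` may touch only relative paths under approved directories. The `ToolPolicy` class and the `write_` naming convention are assumptions for this sketch, not the SDK’s own permission model. It requires Python 3.9 or later for `is_relative_to`.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import FrozenSet


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical per-agent policy: granted tools and writable directories."""
    allowed_tools: FrozenSet[str]
    writable_prefixes: FrozenSet[str] = frozenset()

    def check(self, tool: str, path: str = "") -> bool:
        if tool not in self.allowed_tools:
            return False  # tool was never granted to this agent
        if tool.startswith("write_"):
            p = PurePosixPath(path)
            if p.is_absolute() or ".." in p.parts:
                return False  # only plain relative paths may be written
            return any(p.is_relative_to(prefix) for prefix in self.writable_prefixes)
        return True  # read-only tools need no path check


policy = ToolPolicy(
    allowed_tools=frozenset({"read_file", "write_config"}),
    writable_prefixes=frozenset({"config"}),
)
print(policy.check("write_config", "config/app.yaml"))  # True
print(policy.check("write_config", "src/main.py"))      # False
```

Denying by default, so that anything not explicitly granted is refused, keeps a misbehaving agent from reaching tools or files nobody considered.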

Assessment of Operational Performance and Implementation Patterns

Real-World Implementation Models

Delegating Complex Operational Workflows

One of the most effective ways to use the SDK is through the delegation of repetitive operational tasks, such as preparing a major software release. In this scenario, an agent can be tasked with updating version numbers, generating changelogs from commit history, and ensuring that all dependencies are correctly pinned. This reduces the risk of human error in the “boring but critical” parts of the development cycle.
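A sketch of two of those release chores, assuming the repository follows the Conventional Commits style (`feat:`, `fix:`, and `feat!:` or a `BREAKING CHANGE` footer for breaking changes). The functions are hypothetical helpers an agent could call as tools: one picks the next semantic version from commit messages, and the other groups those messages into a changelog.

```python
import re
from typing import Dict, List, Tuple

# type, optional (scope), optional "!" for breaking, colon, subject (first line)
_CONVENTIONAL = re.compile(r"^(\w+)(?:\([^)]*\))?(!)?:\s*(.*)")


def classify(msg: str) -> Tuple[str, bool, str]:
    """Return (type, is_breaking, subject) for a commit message."""
    m = _CONVENTIONAL.match(msg)
    if not m:
        return "other", "BREAKING CHANGE" in msg, msg
    kind, bang, subject = m.groups()
    return kind, bang is not None or "BREAKING CHANGE" in msg, subject


def next_version(current: str, commits: List[str]) -> str:
    """Major bump for breaking changes, minor for features, patch otherwise."""
    major, minor, patch = (int(part) for part in current.split("."))
    parsed = [classify(c) for c in commits]
    if any(breaking for _, breaking, _ in parsed):
        return f"{major + 1}.0.0"
    if any(kind == "feat" for kind, _, _ in parsed):
        return f"{major}.{minor + 1}.0"
    return f"{major}.{minor}.{patch + 1}"


def changelog(version: str, commits: List[str]) -> str:
    """Group commit subjects under Features, Fixes, and Other."""
    titles = {"feat": "Features", "fix": "Fixes"}
    grouped: Dict[str, List[str]] = {}
    for c in commits:
        kind, _, subject = classify(c)
        grouped.setdefault(titles.get(kind, "Other"), []).append(subject)
    lines = [f"## {version}"]
    for title in ("Features", "Fixes", "Other"):
        if title in grouped:
            lines.append(f"### {title}")
            lines.extend(f"- {s}" for s in grouped[title])
    return "\n".join(lines)


commits = ["feat(cli): add --dry-run flag", "fix: handle empty config", "docs: update README"]
version = next_version("1.4.2", commits)
print(changelog(version, commits))
```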

Moreover, these agents can be programmed to monitor the health of a deployment and automatically roll back changes if specific performance metrics are not met. This level of operational autonomy allows small teams to manage complex infrastructure that would otherwise require a dedicated DevOps engineer. The focus here is on augmenting the developer’s reach, enabling them to oversee vast systems with minimal manual effort.
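The rollback decision itself is usually a simple rule evaluated on fresh metrics. The sketch below assumes two hypothetical limits, an error-rate ceiling and a p95 latency ceiling. It rolls back only when several consecutive samples breach a limit, so that one noisy reading does not undo a healthy release.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Limits:
    max_error_rate: float       # fraction of failed requests, e.g. 0.01 for 1%
    max_p95_latency_ms: float


def violations(sample: Dict[str, float], limits: Limits) -> List[str]:
    """Names of the metrics in one sample that exceed their limits."""
    breached = []
    if sample["error_rate"] > limits.max_error_rate:
        breached.append("error_rate")
    if sample["p95_latency_ms"] > limits.max_p95_latency_ms:
        breached.append("p95_latency_ms")
    return breached


def should_roll_back(
    samples: List[Dict[str, float]], limits: Limits, consecutive: int = 3
) -> bool:
    """Roll back only if each of the last `consecutive` samples breaches a limit."""
    recent = samples[-consecutive:]
    return len(recent) == consecutive and all(violations(s, limits) for s in recent)
```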

Grounding Actions in Live System Data

The SDK excels when agents are grounded in real-time data rather than static snapshots of a codebase. By connecting agents to live system telemetry or active issue trackers, they can provide solutions that are relevant to the current state of the application. For instance, an agent could analyze a spike in error rates and immediately suggest a patch based on the specific logs generated by the production environment.
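Grounding often starts with reducing live data to what matters before it reaches the model. This sketch, with hypothetical thresholds and a hypothetical log format, flags a spike when the latest minute’s error count exceeds three times the recent average. It then collapses `ERROR` lines into their most frequent messages, so the agent receives a short summary instead of the raw log.

```python
from collections import Counter
from typing import List, Tuple


def error_spike(per_minute: List[int], window: int = 5, factor: float = 3.0) -> bool:
    """True if the latest count exceeds `factor` times the mean of the previous `window`."""
    if len(per_minute) <= window:
        return False  # not enough history for a baseline
    baseline = sum(per_minute[-window - 1:-1]) / window
    # Floor the baseline at 1 so a quiet service does not "spike" on a single error.
    return per_minute[-1] > factor * max(baseline, 1.0)


def top_errors(log_lines: List[str], n: int = 3) -> List[Tuple[str, int]]:
    """Collapse ERROR lines to their message text and return the n most common."""
    messages = [line.split("ERROR", 1)[1].strip() for line in log_lines if "ERROR" in line]
    return Counter(messages).most_common(n)


counts = [2, 3, 2, 4, 3, 20]
logs = [
    "12:00:01 INFO request ok",
    "12:00:02 ERROR db timeout on orders",
    "12:00:02 ERROR db timeout on orders",
    "12:00:03 ERROR null user_id in session",
]
if error_spike(counts):
    print(top_errors(logs))  # compact context to hand to the agent
```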

This grounding ensures that the AI’s suggestions are not just syntactically correct, but contextually appropriate for the problem at hand. It transforms the AI from a general-purpose coding assistant into a specialized tool that understands the unique quirks of a specific project. This precision is vital for maintaining high availability in modern, fast-moving software environments.

Extending AI Capabilities Beyond the IDE

While many AI tools are confined to the text editor, the Copilot SDK enables the integration of agentic capabilities into background services and desktop applications. This means that AI logic can be embedded into custom internal tools or even consumer-facing software. For example, a data analysis application could use the SDK to allow users to perform complex data transformations through natural language commands that the agent then executes.

This extension beyond the IDE opens up new possibilities for software design, where the interface becomes a conversation between the user and an intelligent agent. By moving the AI closer to the end-user, developers can create more intuitive and powerful applications. The SDK serves as the foundation for this new class of software, providing the necessary plumbing to make these interactions seamless.

Evaluation of Autonomous Error Recovery and Planning Accuracy

The SDK’s error recovery is surprisingly resilient, though not yet perfect. When an agent gets an unexpected result from a tool call, it often tries to diagnose the issue and adjust its plan accordingly. This self-correcting behavior is a significant improvement over earlier AI integrations, which would typically fail silently or repeat the same mistake.
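The difference between adjusting a plan and repeating a mistake can be sketched as a loop over alternative strategies that records each failure and moves on. In a real agent the model would read the error and choose the next approach; here the fallbacks are a fixed, hypothetical list, so the sketch illustrates only the control flow.

```python
from typing import Callable, List, Tuple


def run_with_recovery(
    strategies: List[Tuple[str, Callable[[], str]]]
) -> Tuple[str, List[str]]:
    """Try each strategy once, in order, logging failures instead of retrying them.

    Returns (result, log). Raises RuntimeError if every strategy fails.
    """
    log: List[str] = []
    for name, attempt in strategies:
        try:
            result = attempt()
        except Exception as exc:  # a real agent would pass this error text to the model
            log.append(f"{name}: failed ({exc})")
            continue
        log.append(f"{name}: ok")
        return result, log
    raise RuntimeError("all strategies failed: " + "; ".join(log))


# Hypothetical strategies for installing a dependency.
def install_pinned() -> str:
    raise ValueError("version conflict")


def install_relaxed() -> str:
    return "installed with relaxed pin"


result, log = run_with_recovery(
    [("install_pinned", install_pinned), ("install_relaxed", install_relaxed)]
)
print(result)
print(log)
```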

Planning accuracy remains a variable factor that depends heavily on the complexity of the task and the quality of the provided context. While the SDK does an excellent job of breaking down medium-sized tasks, very large, architectural changes can still result in logic gaps. Developers must still provide clear boundaries and high-quality “grounding” data to ensure the agent remains productive and does not deviate into inefficient or incorrect paths.

Advantages and Limitations of the SDK Framework

Key Strengths of a Unified AI Development Layer

The most significant advantage of the SDK is the reduction in “glue code” required to build sophisticated AI features. By handling model communication and state maintenance, the framework lets developers focus on the logic unique to their applications. This standardization also means that improvements to the underlying Copilot models flow through to applications built on the SDK, giving AI development a more durable foundation.

Another strength is the deep integration with the existing GitHub ecosystem, which provides a familiar environment for millions of developers. This tight integration ensures that security policies and access controls are consistent with the rest of the development pipeline. The result is a professional-grade tool that feels like a natural extension of the modern developer’s toolkit rather than a bolted-on afterthought.

Technical Constraints and Production Readiness Concerns

Despite its strengths, the SDK is currently in a technical preview phase, which brings inherent risks. It may not yet be suitable for mission-critical systems where absolute predictability is required, as the underlying models can still exhibit non-deterministic behavior. Furthermore, the reliance on a Copilot subscription may be a hurdle for some organizations, particularly those with strict budget constraints or specialized licensing requirements.

There are also concerns regarding the latency of complex agentic workflows. Because these agents often perform multiple round-trips to the model and execute various tools, the total time to complete a task can be significant. For real-time applications, this delay might be unacceptable, requiring developers to carefully choose which tasks are suitable for agentic delegation and which still require traditional, faster automation.
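A rough way to reason about this delay is to add up the sequential costs: each step waits for a model round trip and then for its tool to finish, because the next decision depends on that result. The per-step timings and the 200 ms budget below are hypothetical placeholders, not measurements of the SDK.

```python
from typing import List, Tuple


def workflow_latency_ms(steps: List[Tuple[int, int]]) -> int:
    """Sum model round-trip time and tool execution time over sequential steps.

    Each step is (model_ms, tool_ms); steps run one after another because each
    model call depends on the previous tool result.
    """
    return sum(model_ms + tool_ms for model_ms, tool_ms in steps)


# Hypothetical figures: 8 steps, 2.5 s per model round trip, 0.8 s per tool call.
plan = [(2500, 800)] * 8
total = workflow_latency_ms(plan)
print(total)         # 26400
print(total <= 200)  # False: far outside a 200 ms interactive budget
```

Even with optimistic per-step numbers, the total grows linearly with the number of steps, which is why short, latency-sensitive paths are usually better served by conventional automation.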

Summary of Findings and Final Assessment

Comparing the SDK to Traditional Automation Methods

When compared to traditional automation, the GitHub Copilot SDK offers a level of flexibility that hard-coded scripts simply cannot match. Traditional methods are brittle and fail when they encounter scenarios not explicitly defined by the programmer. In contrast, agentic AI can reason through ambiguity and adapt to changing conditions, making it much more robust for complex, evolving software projects.

However, this flexibility comes at the cost of transparency and control. A script will do exactly what it is told every time, whereas an agent might take slightly different paths to reach the same goal. For developers, the choice between the two depends on whether the task requires the rigid precision of code or the adaptive intelligence of a model. The SDK successfully bridges these two worlds, but it does not entirely replace the need for conventional logic.

Final Recommendation on Adoption and Investment

The final assessment of the GitHub Copilot SDK is that it is a transformative tool for any organization looking to move beyond basic AI assistance. It provides a structured, professional framework for building the next generation of intelligent software. While there are still hurdles to overcome in terms of production stability and performance, the potential for increased developer productivity and improved software quality is too significant to ignore.

For teams already invested in the GitHub ecosystem, early adoption is highly recommended. The learning curve is manageable, and the benefits of standardizing AI workflows will pay dividends as the technology matures. Even for those outside the ecosystem, the SDK represents a benchmark for what a modern AI development platform should look like, making it a critical piece of technology to watch.

Concluding Recommendations for Developers and Enterprises

Identifying Ideal Use Cases for Agentic AI

Organizations are likely to see the greatest success by targeting workflows that are high in volume but only moderately complex. Tasks like automated refactoring, dependency management, and initial bug triage are the most promising areas for agentic intervention. Starting with these low-risk, high-reward scenarios lets developers build confidence in the system’s capabilities while limiting the impact of potential errors. The technology performs best when it is treated as a powerful assistant rather than a total replacement for human judgment.

Future implementations should prioritize environments where the “cost of failure” is easily mitigated by automated testing or human review. The SDK works best when integrated into CI/CD pipelines where every action taken by an agent can be verified before it reaches production. This approach creates a safety net that lets the AI operate at peak efficiency without introducing instability into the system. Moving forward, the most successful developers will be those who can effectively “prompt-engineer” the entire environment, not just the text.
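One way to encode that safety net is a merge gate that every agent change must pass. In this hypothetical sketch, a change merges only if the test suite passed, and changes touching sensitive paths (an assumed list of prefixes) also need a human approval.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical paths that always require a human reviewer.
SENSITIVE_PREFIXES: Tuple[str, ...] = ("migrations/", "infra/", ".github/workflows/")


@dataclass(frozen=True)
class AgentChange:
    files: Tuple[str, ...]       # paths the agent modified
    tests_passed: bool           # result of the CI test run
    human_approved: bool = False


def may_merge(change: AgentChange) -> bool:
    """Gate an agent's change: tests must pass; sensitive paths also need approval."""
    if not change.tests_passed:
        return False
    touches_sensitive = any(f.startswith(SENSITIVE_PREFIXES) for f in change.files)
    return change.human_approved or not touches_sensitive
```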

Strategic Considerations for High-Stakes Environments

In high-stakes environments, such as financial services or healthcare, adopting the SDK requires a more cautious approach to security and compliance. While the AI can significantly speed up development, the need for strict audit trails remains paramount. Future updates to the SDK will likely need to focus on enhanced logging and explainability features to satisfy these rigorous requirements, and making every decision an agent takes traceable should be a top priority for decision-makers in these sectors.
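Traceability can start with a tamper-evident log of agent actions. The sketch below, which is not an SDK feature, chains each record to the previous one with a SHA-256 hash, so editing an earlier entry breaks verification. It does not detect entries removed from the end of the log, and a production system would also record timestamps, the acting identity, and the model’s stated rationale.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, Any]) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: List[Dict[str, Any]], action: str, detail: Dict[str, Any]) -> Dict[str, Any]:
    """Append a record whose hash covers its content and the previous record's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"seq": len(log), "action": action, "detail": detail, "prev": prev}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and check each link to the previous record."""
    prev = GENESIS
    for i, entry in enumerate(log):
        body = {k: entry[k] for k in ("seq", "action", "detail", "prev")}
        if entry["seq"] != i or entry["prev"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


audit: List[Dict[str, Any]] = []
append_entry(audit, "tool_call", {"tool": "read_file", "path": "src/app.py"})
append_entry(audit, "edit", {"path": "src/app.py", "lines_changed": 4})
print(verify(audit))  # True
```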

Ultimately, the strategic move for enterprises involves a shift in hiring and training practices. Instead of focusing solely on syntax and manual coding, the emphasis moves toward managing and orchestrating AI agents. This new paradigm of “AI Orchestration” demands a deeper understanding of system architecture and data grounding. The SDK does not just change how code is written; it changes the very nature of what it means to be a software engineer in an increasingly autonomous world.