When a marketing coordinator blames a missed campaign launch on a misaligned prompt or a developer shrugs off buggy code as a mere algorithmic hallucination, the traditional pillars of professional responsibility begin to tremble. This growing tendency to point toward the machine when things go wrong represents a significant shift in workplace dynamics. As generative artificial intelligence becomes an invisible partner in every department, from finance to human resources, the “AI alibi” is quickly becoming one of the most common excuses in the modern office. Employers now face the daunting task of determining where the machine’s limitation ends and the employee’s negligence begins.

The rapid adoption of these tools has created a paradoxical environment where efficiency is skyrocketing, but the sense of personal ownership over work product is in decline. When an automated system produces a biased report or a security flaw, the human operator often feels like a bystander to a process they initiated but did not fully control. However, for a business to function reliably, the final output must remain the sole responsibility of the individual behind the screen. Without a clear and enforceable standard of accountability, organizations risk a slow erosion of quality that can have devastating legal and reputational consequences.

The New Workplace Alibi: When “The AI Did It” Becomes a Standard Excuse

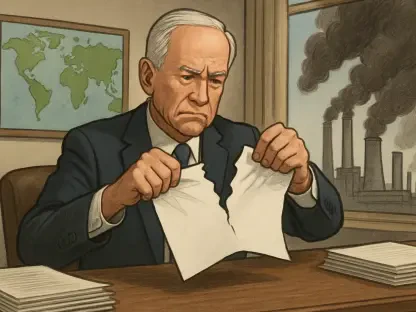

The modern cubicle has witnessed the birth of a peculiar phenomenon where the software itself is summoned to the manager’s office as the primary suspect for professional failure. In the past, a mistake was viewed as either a personal failing or a systemic breakdown, but today, “the AI did it” serves as a convenient catch-all for errors ranging from minor typos to significant data breaches. This trend reflects a broader psychological shift where employees view generative models not as sophisticated calculators, but as semi-autonomous entities capable of shouldering the burden of negligence. When a prompt fails to produce the expected outcome, the resulting error is no longer seen as a failure of human oversight, but as an inherent quirk of the technology that the worker is supposedly powerless to correct.

This abdication of duty poses a direct threat to the integrity of corporate output, particularly as automation becomes inseparable from core business functions. If a project manager permits an inaccurate summary to reach a client because the tool hallucinated a fact, the damage to the firm’s reputation is tangible, yet the internal defense often remains focused on the machine’s current limitations. Business leaders now confront a landscape where the boundary between a tool and a teammate has blurred so significantly that workers feel comfortable delegating their moral agency to a black-box algorithm. Without a firm intervention, this culture of blame risks turning high-performance environments into passive monitoring stations where quality control is treated as an optional afterthought rather than a core requirement.

Why Accountability Matters in the Total Integration of AI

The transition from AI as a futuristic novelty to a fundamental work tool happened with such velocity that the conceptual framework for individual responsibility struggled to keep pace. While the efficiency gains of automated drafting and data analysis are undeniable, the erosion of accountability creates a vacuum where organizational standards can quickly disintegrate. A workplace that allows its staff to disclaim responsibility for AI-generated errors is one that effectively lowers its bar for excellence to whatever the current version of the software happens to produce. For human resources executives, the challenge lies in reasserting that the human remains the ultimate master of the tool, regardless of how intelligent that tool appears to be.

Furthermore, the lack of a firm accountability structure introduces massive operational risks, particularly when automated tools handle sensitive or regulated information. If an employee treats an algorithm as a surrogate for judgment, the nuance required for ethical decision-making and strategic alignment is lost. Maintaining a culture of rigor requires that every individual understands that technology is a productivity multiplier, not a replacement for the discerning eye of a professional. By holding the line on accountability, companies ensure that their workforce remains engaged, critical, and ultimately responsible for the brand’s promise to its stakeholders. This engagement is what prevents a minor technical glitch from escalating into a full-scale corporate crisis.

The Intersection of Governance, Tool Selection, and Role-Based Authority

Effective oversight begins with a clear distinction between sanctioned enterprise tools and the “shadow AI” of unsanctioned public platforms. Many mistakes attributed to AI actually stem from employees using consumer-grade tools for enterprise-level tasks where data privacy and accuracy are not guaranteed. A robust governance program must define which tools are approved for specific tasks and which departments have the authority to use them. Using an algorithm to brainstorm a catchy headline for a blog post is vastly different from using a screening tool to evaluate job candidates or analyze employee performance metrics. Organizations must establish role-based authority to prevent the unauthorized use of high-risk tools in sensitive decision-making processes.

When an error occurs, the investigation should not focus solely on the algorithm’s output, but on whether the employee followed the established protocols for tool selection. Governance must be active and ongoing, involving stakeholders from legal, IT, and human resources to review new use cases and assess risk levels. If an employee uses an unapproved open-source model to process confidential client data, the subsequent leak is a failure of compliance rather than a technical malfunction. By providing clear guidelines on which tools are permissible for specific functions, employers can eliminate the ambiguity that often fuels the “AI did it” defense, ensuring that every team member knows the boundaries of their digital toolkit.
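For teams that want to encode such guidelines rather than leave them in a handbook, the tool, task, and role boundaries described above could be sketched roughly as follows. This is a minimal illustration, not a reference implementation: the tool names, task labels, roles, and the `check_use` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """One approved tool, the tasks it may be used for, and who may use it."""
    tool: str
    allowed_tasks: frozenset
    allowed_roles: frozenset
    high_risk: bool = False


# Hypothetical approved-tool register; every name here is illustrative only.
POLICIES = {
    "enterprise-assistant": ToolPolicy(
        tool="enterprise-assistant",
        allowed_tasks=frozenset({"brainstorm_headline", "draft_summary"}),
        allowed_roles=frozenset({"marketing", "hr", "finance"}),
    ),
    "candidate-screener": ToolPolicy(
        tool="candidate-screener",
        allowed_tasks=frozenset({"screen_candidates"}),
        allowed_roles=frozenset({"hr"}),
        high_risk=True,
    ),
}


def check_use(tool, task, role):
    """Return (allowed, reason) for a proposed use of an AI tool."""
    policy = POLICIES.get(tool)
    if policy is None:
        # Anything absent from the register is treated as shadow AI.
        return False, "tool is not on the approved list"
    if task not in policy.allowed_tasks:
        return False, "tool is not approved for this task"
    if role not in policy.allowed_roles:
        return False, "role is not authorized to use this tool"
    if policy.high_risk:
        return True, "allowed, human review required"
    return True, "allowed"
```

A register like this makes the post-incident question concrete: the investigation can ask whether `check_use` would have permitted the tool, task, and role combination in the first place, rather than debating the quality of the output.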

Legal Perspectives and Expert Insights on Algorithmic Liability

Labor and employment experts, including Jason Stavely and Meghan O’Connor from Quarles & Brady, emphasize that the legal landscape is shifting to close loopholes regarding algorithmic misuse. Current regulatory trends increasingly classify AI systems used in employment decisions (such as hiring, promotion, and termination) as high-risk applications. For instance, Colorado’s Artificial Intelligence Act requires organizations that deploy such high-risk systems to complete impact assessments and, where technically feasible, to offer human review of adverse decisions. Legal experts argue that from a liability standpoint, AI is no different from any other office utility; the user remains legally responsible for the final result. If a biased algorithm leads to a discriminatory hiring practice, the company cannot point to the software as a legal shield.

Experts warn that unless “human-in-the-loop” protocols are strictly enforced, employers may find themselves defenseless in litigation involving confidentiality breaches or professional malpractice. In practice, employers are increasingly expected to demonstrate that they maintained adequate control over their automated systems. Attorneys suggest that companies should treat AI outputs with the same skepticism they would apply to a draft provided by a junior intern. Without a verified trail of human review, the use of AI can become a liability rather than an asset. As state and federal agencies increase their scrutiny of automated decision-making, human validation becomes not just a matter of corporate policy, but a legal necessity.
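What a verified trail of human review might look like in practice can be sketched in a few lines. The example below is an assumption-laden illustration only: the `Deliverable` record, its fields, and the release rule are hypothetical and do not reflect any specific legal standard or product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Deliverable:
    """A work product that records whether AI was used and who reviewed it."""
    title: str
    ai_assisted: bool
    reviewed_by: Optional[str] = None
    reviewed_at: Optional[datetime] = None

    def sign_off(self, reviewer: str) -> None:
        """Record the named human who reviewed and accepts the output."""
        if not reviewer.strip():
            raise ValueError("a named reviewer is required")
        self.reviewed_by = reviewer
        self.reviewed_at = datetime.now(timezone.utc)

    def can_release(self) -> bool:
        """AI-assisted work may leave the building only after sign-off."""
        return (not self.ai_assisted) or self.reviewed_by is not None


# Usage: an AI-assisted summary is blocked until a person signs it.
summary = Deliverable("Client summary", ai_assisted=True)
blocked = not summary.can_release()
summary.sign_off("j.doe")
released = summary.can_release()
```

The point of the design is that the reviewer's name and timestamp are attached to the deliverable itself, so responsibility for the final output is recorded at the moment of release rather than reconstructed after something goes wrong.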

Strategies for Establishing an Accountability-First AI Culture

To prevent technology from becoming a scapegoat, HR leaders must move beyond static usage policies and toward active governance programs that include leadership buy-in and ongoing risk review. Practical steps include implementing mandatory training that focuses specifically on the limitations of AI, such as inherent bias and the tendency to hallucinate. Handbooks should explicitly state that the use of AI does not excuse errors and that “the AI did it” is not an acceptable defense for poor performance or ethical lapses. Employers should also be transparent about how AI prompts and inputs are monitored, ensuring that employees understand their digital interactions are being audited for compliance and quality control.

By treating AI as a productivity enhancer rather than a decision-maker, companies can foster an environment where technology supports human judgment. This culture is sustained through consistent disciplinary practices that apply to AI misuse just as they would to any other form of misconduct. Regular audits of AI-generated work product can help identify patterns of over-reliance before they lead to significant errors. When workers know that their use of these tools is transparent and that they are the final signatories on every deliverable, the temptation to blame the machine diminishes. Ultimately, the goal is to create a professional environment where innovation is encouraged, but the weight of responsibility remains firmly on the shoulders of the human workforce.

Leadership teams that get this right establish a comprehensive framework that integrates advanced automation while preserving the core value of individual responsibility. They prioritize training that shifts the focus from simple technical proficiency to ethical stewardship and manual verification, and that treats the human-in-the-loop not as a suggestion but as a mandatory operational requirement for all high-risk tasks. They formalize these expectations in the employee handbook, which provides a stable foundation for consistent discipline and quality assurance. By maintaining a clear boundary between experimental drafting and final client-facing deliverables, the organization protects its reputation and ensures that every employee remains an active participant in the creative process. The result is a resilient corporate culture that uses the speed of modern technology without sacrificing the integrity of human judgment.