Across dozens of interviews and practitioner debriefs, a pattern kept surfacing: the loudest voice still too often dictates the roadmap. Product managers described backlogs stuffed with executive pet projects; marketers bemoaned last‑minute campaign swaps; ops leaders cataloged initiative sprawl masked as “urgency.” The common fix was not more meetings or sharper persuasion. It was a shift toward template-driven prioritization that forces clarity and produces durable alignment.

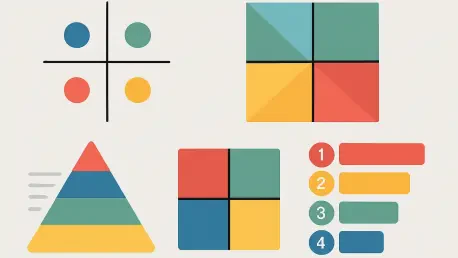

This roundup distills the most useful guidance from product leads, PMO heads, marketing strategists, agile coaches, and RevOps directors who have lived both sides of the chaos. Their perspectives converge on five templates—RICE, Eisenhower Matrix, Effort vs. Impact, MoSCoW, and Value vs. Complexity—and a playbook for embedding them. Where they diverge is just as instructive: some warn about quick‑win bias; others caution against data theater. Together, the insights form a practical map for choosing, calibrating, and sustaining a system that channels limited capacity toward the highest return.

Ground Rules From the Field: What Makes a Template Work

Seasoned operators stress that a template is only as strong as its definitions. “High impact” becomes contentious unless tied to explicit thresholds such as “revenue change above $10,000” or “NPS improvement of 5 points across 50% of users.” Teams that codify these thresholds before scoring avoid circular debates and reduce re‑litigation later. Several PMOs reported cycle‑time gains once they published a glossary for every scoring term and trained reviewers to use the same baseline.

Another shared lesson concerns intake and visibility. Centralizing requests in a single queue prevents shadow backlogs and reveals true load. Cross‑functional leaders recommend co‑scoring with stakeholders, not solitary math behind closed doors. This socialized scoring builds confidence in the process, even when someone’s favorite project ranks lower. In practice, the combination of objective criteria, transparent boards, and set review cadences reshapes behavior more reliably than top‑down mandates.

Template 1: RICE for Big Bets and Integrated Roadmaps

Product leaders and strategy directors favor RICE—Reach × Impact × Confidence ÷ Effort—when stakes are high, dependencies are thick, and budgets are visible. They credit RICE with reducing “initiative inflation” by anchoring conversation in quantifiable reach and defined impact ranges, then tempering rosy forecasts through explicit confidence scores. Portfolio managers reported 15–25% reductions in cycle time after standardizing Impact bands (for example, “>$10k net revenue” or “>2% retention lift”) and sharpening definitions for Reach cohorts.
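The arithmetic behind a RICE score is simple enough to sketch. The Python below is a minimal illustration, not a prescribed implementation: the `Initiative` fields, the sample backlog, and the units (Reach as users per quarter, Effort as person-months) are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    reach: float       # assumed unit: users affected per quarter
    impact: float      # score taken from an agreed Impact band
    confidence: float  # 0.0 to 1.0
    effort: float      # assumed unit: person-months, must be positive


def rice_score(item: Initiative) -> float:
    """Reach x Impact x Confidence, divided by Effort."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    return item.reach * item.impact * item.confidence / item.effort


# Hypothetical backlog used only to show the ranking step.
backlog = [
    Initiative("Self-serve onboarding", reach=4000, impact=2.0, confidence=0.8, effort=4),
    Initiative("Billing revamp", reach=1500, impact=3.0, confidence=0.5, effort=6),
]
ranked = sorted(backlog, key=rice_score, reverse=True)
```

In this sample the item with the higher Impact band still ranks second, because its lower Confidence and higher Effort pull the score down.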

However, RICE is not a silver bullet. Innovation teams argue the method can underweight transformative ideas with uncertain outcomes, creating a stealth bias toward incrementalism. Experienced practitioners address this by running short discovery spikes designed to raise Confidence before a final score, rather than treating low confidence as a death sentence. Leaders also warn against “effort sandbagging,” recommending peer review on estimates and post‑mortem audits comparing planned versus actuals to recalibrate scoring discipline.

Template 2: Eisenhower Matrix for Fast Triage and Focus

Support managers, incident commanders, and executive assistants consistently cite the Eisenhower Matrix as the lowest-friction triage tool. Urgent‑and‑important work lands in “Do First,” important‑but‑not‑urgent items get scheduled, urgent‑but‑not‑important tasks are delegated, and the remainder is cut. Teams handling inbound requests—IT help desks, social response crews, field service coordinators—lean on this grid to rescue their day from reactive ping‑pong.

Yet veterans caution against the trap of living only in Quadrant I. Leaders who pair Eisenhower with weekly “protect the important” blocks report steadier progress on foundational work. Practical tweaks include setting explicit maximums for “Do First” slots per shift and creating a “Parking Lot: Important Next” column to safeguard strategic tasks from drowning beneath time‑sensitive chatter. This pairing turns a blunt triage tool into one that cuts noise while protecting substance.
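The grid and the “Do First” cap can be sketched together in a few lines of Python. This is an illustrative sketch under stated assumptions: the `Needs Decision` bucket, the function names, and the sample requests are hypothetical, and a real team would choose its own overflow rule.

```python
def quadrant(urgent: bool, important: bool) -> str:
    """Map the two Eisenhower questions to one of four actions."""
    if urgent and important:
        return "Do First"
    if important:
        return "Schedule"
    if urgent:
        return "Delegate"
    return "Cut"


def triage(requests, do_first_cap):
    """Sort (name, urgent, important) tuples into quadrants.

    Once "Do First" is full, further urgent-and-important items land in a
    hypothetical "Needs Decision" bucket so a person makes the trade-off.
    """
    board = {"Do First": [], "Schedule": [], "Delegate": [], "Cut": [], "Needs Decision": []}
    for name, urgent, important in requests:
        bucket = quadrant(urgent, important)
        if bucket == "Do First" and len(board["Do First"]) >= do_first_cap:
            bucket = "Needs Decision"
        board[bucket].append(name)
    return board


# Made-up inbound requests for one shift.
requests = [
    ("Checkout outage", True, True),
    ("Quarterly access review", False, True),
    ("Vendor survey reminder", True, False),
    ("Data export failing", True, True),
    ("Rename old wiki pages", False, False),
]
board = triage(requests, do_first_cap=1)
```

With a cap of one, the second urgent-and-important request is surfaced for an explicit decision instead of silently enlarging Quadrant I.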

Template 3: Effort vs. Impact for Sprint Planning and Quick Wins

Agile coaches and growth marketers endorse Effort vs. Impact as the workshop favorite. In a 2×2 grid, “High Impact/Low Effort” quick wins surface immediately, while “High Impact/High Effort” major projects shape upcoming sprints. This visual simplicity reduces analysis paralysis and kickstarts momentum, especially for teams attempting to reboot delivery confidence or validate new hypotheses quickly.

However, growth practitioners warn about becoming addicted to quick wins. Over‑optimizing for the quick‑win quadrant can starve platform investments and architectural work that rarely shows immediate lift. Teams mitigate this by reserving a fixed capacity slice for “Major Projects” and tracking longitudinal value metrics apart from weekly KPIs. A common practice is to ring‑fence 30–40% of sprint points for high‑leverage infrastructure while still harvesting a few easy wins to keep velocity and morale high.
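Ring-fencing capacity is easy to make explicit. The sketch below assumes a whole-number percentage and a 35% default, which sits inside the range practitioners mentioned; the function name and the 40-point sprint are hypothetical.

```python
def ring_fence(total_points: int, major_pct: int = 35) -> dict:
    """Split sprint points into a protected slice and the remainder.

    major_pct is a whole-number percentage; 35 is an assumed default
    inside the 30-40% range practitioners described.
    """
    if not 0 <= major_pct <= 100:
        raise ValueError("major_pct must be between 0 and 100")
    major = total_points * major_pct // 100  # integer math, rounds down
    return {"major_projects": major, "everything_else": total_points - major}


plan = ring_fence(total_points=40)
```

For a 40-point sprint this reserves 14 points for major projects and leaves 26 for quick wins and other work.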

Template 4: MoSCoW for Scope Discipline Under Deadlines

Program managers in compliance‑heavy contexts—fintech, healthcare, regulated marketplaces—praise MoSCoW (Must, Should, Could, Won’t) for drawing hard lines without gutting quality. “Musts” lock down critical path elements like security controls, audit trails, and required localization; “Coulds” become the release valve when timelines compress. This shared vocabulary shortens negotiations with legal, risk, and external partners because everyone sees the same boundary map.

The dark side of MoSCoW appears when “Must” categories swell. Launch teams sometimes cram nonessential preferences into Must to avoid hard trade‑offs, clogging throughput. Experienced PMs recommend work‑in‑progress limits on Musts, testable acceptance criteria, and pre‑mortems that force teams to define the smallest lovable scope. When MoSCoW lives alongside dependency maps and SLA commitments, it functions as a shield rather than a crutch.
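A work-in-progress limit on Musts can be checked mechanically. The following is a minimal sketch: the limit of three, the function name, and the sample release scope are assumptions for illustration only.

```python
from collections import Counter


def must_overflow(items, must_limit):
    """Return how many Musts exceed the agreed limit (0 if within it)."""
    counts = Counter(category for _, category in items)
    return max(counts["Must"] - must_limit, 0)


# Hypothetical release scope as (item, MoSCoW category) pairs.
release_scope = [
    ("Audit trail", "Must"),
    ("Access controls", "Must"),
    ("Required localization", "Must"),
    ("Custom themes", "Must"),
    ("Bulk export", "Should"),
    ("Animated onboarding", "Could"),
]
overflow = must_overflow(release_scope, must_limit=3)
```

A nonzero result is a prompt to renegotiate: something labeled Must has to move to Should or Could before the scope is accepted.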

Template 5: Value vs. Complexity for Executive Portfolios

C‑suite leaders and transformation heads prefer Value vs. Complexity when balancing a portfolio that mixes quick wins with moonshots. Unlike Effort vs. Impact, Complexity in this model captures organizational drag—change management, integration debt, vendor lock‑in, data privacy hurdles, and procurement constraints. Executives interviewed highlight the importance of normalizing Complexity across regions to fairly compare, for example, a rollout in a market with strict data localization against one with looser rules.

A fresh twist is the rise of AI‑assisted scoring. Tools that scan project histories flag hidden dependencies, skill gaps, and regulatory patterns that often inflate Complexity after kickoff. Automation that re‑computes scores as conditions change challenges the misconception that prioritization is static. Leaders who adopt dynamic dashboards see fewer surprises and move resources before bottlenecks harden into delays.

Choosing Templates by Team Shape and Cadence

Operations chiefs emphasize matching methods to team size and data readiness. Small squads thrive on Eisenhower and Effort vs. Impact because these tools trade precision for flow. Cross‑functional groups benefit from RICE and MoSCoW, where the rigor absorbs friction between departments. At the portfolio tier, Value vs. Complexity clarifies trade‑offs between incremental revenue and transformational capability.

Timing matters as much as structure. In sprint‑based environments, lightweight grids keep grooming quick and decisions reversible. During quarterly planning, leaders have the runway to gather data and harmonize scores at scale. The most consistent performers shift fluently: simple tools to move, heavier tools to bet.

The Calibration Question: Turning Subjectivity Into Shared Math

Multiple sources insist that scoring only works when its ingredients are clear, comparable, and tested. That starts with definitions—what makes a 5 versus a 3 on Impact—and continues with evidence, like customer counts for Reach or analytics for conversion lift. Co‑scoring sessions reduce bias, and rotating “red team” reviews prevent teams from gaming the model unconsciously.

Post‑project audits close the loop. Comparing planned to actual Reach, Impact, and Effort exposes optimistic streaks and chronic underestimation. Teams that log scoring rationales and decision dates build a feedback library that sharpens future calls. Over time, the model reflects the organization’s real delivery curve, not its aspirational one.
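One simple way to run such an audit is to compute the average ratio of actual to planned effort and apply it to new estimates. This is a sketch of one possible calibration, not a method the sources prescribed; the numbers are made up and the helper name is hypothetical.

```python
from statistics import mean


def effort_bias(history):
    """Average ratio of actual to planned effort.

    A value above 1.0 means the team tends to underestimate.
    """
    return mean(actual / planned for planned, actual in history)


# (planned, actual) effort in person-weeks; illustrative numbers only.
past_projects = [(4, 6), (10, 12), (2, 3)]
bias = effort_bias(past_projects)

# Scale a new 8-week estimate by the observed bias before scoring it.
calibrated_estimate = 8 * bias
```

Here the team has historically needed about 1.4 times its planned effort, so an 8-week estimate would enter scoring as roughly 11 weeks.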

Six Steps Practitioners Use to Make Any Template Stick

Leaders align on six moves regardless of framework. First, define scoring criteria in plain terms tied to measurable outcomes. Second, centralize intake so the backlog reflects reality. Third, score with data and cross‑functional participation, normalizing scales across disciplines. Fourth, map dependencies to avoid ranking blocked work above its prerequisite. Fifth, validate rankings with stakeholders who own outcomes. Sixth, set review rhythms—weekly for agile teams, monthly for departments, quarterly for executives—so the list stays current.
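The fourth step, keeping blocked work from outranking its prerequisite, can be sketched with a dependency-aware sort. The example below uses `graphlib.TopologicalSorter` from the Python standard library (3.9+) and greedily picks the highest-scoring item whose prerequisites are already placed. The scores, item names, and the greedy rule itself are assumptions for illustration; it also assumes every prerequisite appears in `scores`.

```python
from graphlib import TopologicalSorter


def dependency_aware_order(scores, prerequisites):
    """Order items by score without placing any item before its prerequisites.

    scores: item -> priority score (higher is better)
    prerequisites: item -> list of items that must come first
    Raises graphlib.CycleError if the dependencies form a loop.
    """
    sorter = TopologicalSorter({item: prerequisites.get(item, ()) for item in scores})
    sorter.prepare()
    ready, order = [], []
    while sorter.is_active():
        ready.extend(sorter.get_ready())    # items whose prerequisites are done
        ready.sort(key=lambda i: scores[i])  # ascending, so pop() takes the best
        best = ready.pop()
        order.append(best)
        sorter.done(best)                   # may unlock dependent items
    return order


# Hypothetical scores: "SSO" scores highest but depends on "Audit log".
scores = {"SSO": 900, "Audit log": 300, "Admin console": 500}
prerequisites = {"SSO": ["Audit log"]}
order = dependency_aware_order(scores, prerequisites)
```

In this sample the top-scoring item lands last because it is blocked; a team might reasonably respond by raising the prerequisite's priority instead, which is a judgment call the code does not make.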

This cadence turns scoreboards into operating systems. Without it, even the best template devolves into a snapshot that ages badly. With it, teams gain a living process that adapts to fresh information without reopening foundational arguments every time a new request lands.

Where Opinions Diverge: Speed, Precision, and Innovation Risk

Practitioners split on how much precision is worth chasing. Some argue that elaborate scoring breeds false certainty and wastes time. Others counter that without structure, organizations default to politics. The middle ground that emerges in high performers is pragmatic: use the lightest method that resolves the decision at hand, and escalate to heavier tools only when the bet or blast radius justifies the overhead.

Innovation advocates worry about templates penalizing ambiguity. Portfolio owners respond by funding discovery work explicitly, then resubmitting refined scores. This approach preserves space for breakthrough ideas while conserving resources by killing weak bets early. The upshot: it is not the template that smothers innovation, but the refusal to underwrite learning loops.

Real‑World Patterns by Function

Product leaders often run RICE for roadmap shaping, MoSCoW for release scope, and Effort vs. Impact within squads for sprint‑level choices. Marketing directors lean on Effort vs. Impact for campaign backlogs and use Value vs. Complexity when considering channel expansions or brand refreshes that carry change‑management weight. Operations heads favor Eisenhower for daily stability work, MoSCoW for cutover plans, and Value vs. Complexity to pace large process overhauls.

What unifies these patterns is a tiered toolkit. Each function picks one or two default templates and a fallback for exceptional cases. That consistency keeps cross‑team negotiations grounded while preserving flexibility for edge scenarios.

Culture Before Calculus: The Human Side of Prioritization

Every source returned to the same theme: templates fail in opaque cultures. Publishing dashboards, documenting rationales, and linking items to OKRs reduce friction because people can see the “why,” not just the “what.” Teams that invite dissent during scoring sessions hear risks earlier, and decision quality improves without sacrificing speed.

Incentives also matter. When performance metrics reward shipping volume rather than outcome quality, people game the grid. Leaders who align incentives to impact—revenue lift, retention gains, cost reduction, risk mitigation—see healthier backlogs and fewer rushed detours.

The Role of AI and Automation: From Static Lists to Living Systems

Automation reshapes the day‑to‑day mechanics. When status flips to “Blocked,” automations can elevate the blocker’s priority, notify owners, and adjust sprint scope. When budgets change, dashboards recompute Value vs. Complexity and surface candidates for deferral. These real‑time reactions keep rankings aligned with reality, not last month’s plan.

AI extends that with foresight. Models trained on historical delivery data detect early signs of slippage, recommend resource reassignments based on skills and capacity, and simulate the ripple effects of moving a project up in the queue. Leaders using AI‑assisted portfolios report less firefighting and more deliberate trade‑offs, because the second‑order impacts are visible before commitment.

Tooling That Scales: How Teams Operationalize the Templates

Teams described success with platforms that mirror their chosen templates as configurable boards. Formula fields compute RICE or Value vs. Complexity, while Kanban and Gantt views turn priorities into movement. Comment threads attached to items preserve context around contentious scores. Executive dashboards roll up multiple boards, showing which initiatives are blocked, over capacity, or drifting from OKRs.

Agentic AI has begun automating routine decisions inside these systems. Rules like “never deprioritize Revenue‑Critical,” “escalate items stuck for 3 days,” or “reduce WIP on Musts above threshold” keep hygiene intact without constant human policing. The tools do not replace judgment; they enforce the agreements teams already made.
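A rule such as “escalate items stuck for 3 days” reduces to a date comparison. The sketch below is illustrative only: the item list, the `last_moved` field, and the fixed `today` are hypothetical, and a real platform would supply these from its own records.

```python
from datetime import date, timedelta


def items_to_escalate(items, today, max_stuck_days=3):
    """Return names of items whose status has not changed for max_stuck_days or more."""
    return [
        name
        for name, last_moved in items
        if (today - last_moved).days >= max_stuck_days
    ]


# Hypothetical board snapshot as (item name, date of last status change).
today = date(2024, 6, 10)
items = [
    ("Pricing page test", today - timedelta(days=1)),
    ("SSO integration", today - timedelta(days=3)),
    ("Invoice export", today - timedelta(days=7)),
]
stuck = items_to_escalate(items, today)
```

The rule only flags candidates; who gets notified and what happens next remain agreements the team has to make.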

Measuring Outcomes: What Changes After Adoption

Organizations that lock in a template and cadence report tangible shifts. Cycle time drops as teams stop reshuffling work. Roadmap debates take half the time because criteria, not personalities, lead. Shadow projects shrink when intake is centralized. Post‑launch retros spotlight fewer “surprise” dependencies because prerequisites were mapped.

Perhaps most importantly, teams describe higher trust. Sales understands why a feature slipped. Compliance sees its nonnegotiables respected. Engineering feels protected from last‑minute scope creep. The template becomes a social contract, converting prioritization from a recurring conflict into a shared craft.

Common Pitfalls and How Experts Avoid Them

Three traps come up repeatedly. First, vague criteria. Remedy: publish thresholds and examples for every score. Second, spreadsheet rot. Remedy: shift to living boards with automated recalculation and alerts. Third, one‑size‑fits‑all zeal. Remedy: map templates to decision tiers and switch consciously when stakes or ambiguity change.

Leaders also warn against overfitting the model to last quarter’s anomalies. Regular calibration sessions, with a mix of optimists and skeptics, keep the system resilient. The goal is not perfect prediction; it is faster, fairer decisions under uncertainty.

Case Notes: How Teams Blend Templates

One consumer SaaS company runs Value vs. Complexity during quarterly planning to select investment themes, then uses RICE to rank initiatives within each theme. As projects near delivery, MoSCoW guards release scope, while squads use Effort vs. Impact to fill sprints with a mix of blockers, quick wins, and tech debt. Support uses the Eisenhower Matrix daily to triage incidents, feeding critical insights back into the roadmap.

A healthcare platform chose a different blend. Regulatory programs start in MoSCoW to protect compliance, portfolio leaders apply Value vs. Complexity to weigh expansion markets, and engineering overlays RICE for cross‑team work with measurable outcomes. The thread across both examples is intentional layering—each template has a job, and each handoff keeps context intact.

Conflict Resolution: When Departments Disagree

Sources recommend a three‑step protocol when priorities clash. First, return to shared criteria: walk through each score together and test the evidence. Second, expose dependencies: reveal what must shift elsewhere for a change to stick. Third, escalate to an executive forum that adjudicates using the same portfolio model rather than rank or urgency theatrics.

Because the framework is common, the conversation becomes a comparison of assumptions, not a contest of authority. The outcome is rarely perfect for any single team, but the decision lands faster and with less residual resentment.

Building Muscle Memory: Training, Rituals, and Reinforcement

Training turns templates from documents into reflexes. Teams that invest in hands‑on scoring labs, shadow sessions, and “scoring surgery” office hours shorten the adoption curve. Rituals like weekly backlog grooming, monthly portfolio reviews, and quarterly calibration cement the behavior. Leaders reinforce it by celebrating projects that were deprioritized for sound reasons, not only those that shipped.

Over time, the organization stops asking “which template is right” and starts asking “what is the right level of decision for this moment.” That shift signals maturity: prioritization becomes a capability rather than a meeting.

Data Hygiene: The Quiet Multiplier

Clean data amplifies every template. Analytics teams advise standardizing naming conventions, maintaining source‑of‑truth dashboards, and tagging items with OKR links, risk levels, and dependency IDs. Without this hygiene, RICE degenerates into guesswork and Value vs. Complexity drifts toward opinion.

Several leaders pair data checks with dashboards that display confidence intervals or freshness dates. If the Reach data is 90 days old, that caveat appears next to the score, prompting either a quick refresh or a conscious decision to proceed with uncertainty.
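A freshness caveat of this kind can be generated from the measurement date. The following is a minimal sketch, assuming a 90-day threshold and a hypothetical helper name; the wording of the caveat is illustrative.

```python
from datetime import date, timedelta


def freshness_caveat(metric, measured_on, today, max_age_days=90):
    """Return a caveat string when the data is at least max_age_days old, else ''."""
    age = (today - measured_on).days
    if age >= max_age_days:
        return f"{metric} data is {age} days old; refresh or accept the uncertainty"
    return ""


today = date(2024, 6, 10)
caveat = freshness_caveat("Reach", today - timedelta(days=90), today)
fresh = freshness_caveat("Reach", today - timedelta(days=10), today)
```

An empty string means the score can be shown without a caveat; anything else is displayed next to the score.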

Signals That It’s Working

Practitioners look for leading indicators: fewer “urgent realignments,” sprint commitments met more consistently, smaller and more intentional Must scopes, and trend lines that show recalculations happening without leadership prodding. Backlog aging curves flatten as low‑value items exit faster. Stakeholder satisfaction improves because visibility replaces guesswork.

Lagging indicators follow: better retention, cleaner release quality, higher forecast accuracy. None of these arise from templates alone, but the structure accelerates the learning cycles that produce them.

How AI Changes the Conversation About Complexity

Leaders increasingly treat Complexity as partly discoverable rather than purely estimated. AI models surface latent factors: overlapping integrations scheduled for the same week, skill mismatches that will slow onboarding, or policy updates that increase review time. This turns Complexity from a blunt input into a living risk register that updates as the environment shifts.

The most effective teams treat AI outputs as provocations, not verdicts. They invite human review, test “what if” scenarios, and use the insights to time resource swaps before slippage becomes visible on timelines. Complexity, once a guess, becomes a manageable dimension.

Practical Add‑Ons: Guardrails That Keep Priorities Honest

Veterans recommend three guardrails to preserve integrity. First, WIP caps per category, especially for Musts. Second, freeze windows, where changes require explicit approval to avoid thrash. Third, “explain‑the‑change” fields that capture the rationale any time a score moves. These light constraints produce heavy benefits: less churn, clearer accountability, and easier retros.

Some teams add “kill dates” for exploratory items that fail to reach threshold confidence by a set review. This protects capacity while still respecting the need to learn.

Making Space for Long‑Term Bets

Boards that only track this quarter’s wins foster myopia. Portfolio leaders advocate a dual‑track view: near‑term value captured by RICE or Effort vs. Impact, and mid‑to‑long‑term capability tracked with Value vs. Complexity. The former drives cash flow; the latter compounds advantage. Explicitly budgeting time and money for both reduces the whiplash of switching strategies with every headwind.

Metrics help here: publish a ratio target between maintenance, optimization, and transformation. When the ratio drifts, the dashboard asks “why” before a crisis forces the conversation.
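A ratio check like this can be expressed as a comparison between target shares and actual allocation. The sketch below is one possible form: the target mix, the five-point tolerance, and the point totals are assumptions for illustration, not figures from the sources.

```python
def ratio_drift(actual_points, target_share, tolerance=0.05):
    """Return categories whose actual share differs from target by more than tolerance.

    Values are (actual share - target share), rounded to two decimals.
    """
    total = sum(actual_points.values())
    if total <= 0:
        raise ValueError("no capacity recorded")
    drift = {}
    for category, target in target_share.items():
        share = actual_points.get(category, 0) / total
        if abs(share - target) > tolerance:
            drift[category] = round(share - target, 2)
    return drift


# Hypothetical target mix and last quarter's actual allocation in points.
target_share = {"maintenance": 0.2, "optimization": 0.5, "transformation": 0.3}
actual_points = {"maintenance": 40, "optimization": 50, "transformation": 10}
drift = ratio_drift(actual_points, target_share)
```

In this sample maintenance has absorbed twice its intended share at the expense of transformation, which is the kind of drift a dashboard would surface for discussion.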

A Note on Language: Aligning Around OKRs

Tying every item to an OKR cleans up ambiguous debates. When a task cannot map cleanly to an objective or key result, its priority faces heavier scrutiny. This linkage also makes dashboards more intuitive for executives who think in outcomes, not epics or tickets. Over time, OKR‑linked backlogs tell a coherent story about how strategy unfolds in work.

The payoff is compounding clarity. Teams stop shipping orphaned features and start sequencing work that moves the same needles in concert.

Final Takeaways From the Roundup

Across sources, three conclusions stand out. First, the “best” template is the one consistently used with clear definitions and visible rationale. Second, pairing methods by decision tier (Eisenhower and Effort vs. Impact for flow, RICE and MoSCoW for cross‑team bets, Value vs. Complexity for portfolio balance) reconciles speed with rigor. Third, automation and AI elevate prioritization from episodic to continuous, keeping rankings aligned with shifting facts rather than stale assumptions.

For next steps, leaders recommend piloting the template that fits the team’s current decision scale, codifying the six‑step rollout, and wiring dashboards that auto‑recompute scores and alert owners on change. Further reading should include deep dives on defining Impact bands, building dependency maps, and designing portfolio review forums that resolve conflicts using the same scoring language. The roundup closes on a simple point of agreement: prioritization works best once it becomes a habit the organization practices, not a spreadsheet the organization fears.